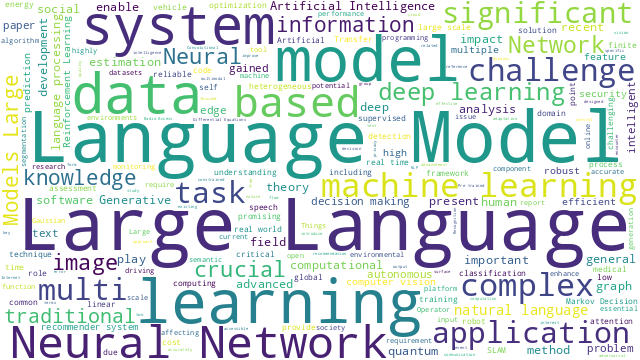

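This post lists the latest papers retrieved daily from arXiv, grouped into categories such as natural language processing, information retrieval, and computer vision.

+Statistics

+561 papers were updated today, including:

+

+- Natural language processing: 92 papers

+- Information retrieval: 8 papers

+- Computer vision: 148 papers

+

+Natural Language Processing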

+

+ 1. 【2410.08211】LatteCLIP: Unsupervised CLIP Fine-Tuning via LMM-Synthetic Texts

+ Link: https://arxiv.org/abs/2410.08211

+ Authors: Anh-Quan Cao, Maximilian Jaritz, Matthieu Guillaumin, Raoul de Charette, Loris Bazzani

+ Categories: Computer Vision and Pattern Recognition (cs.CV); Artificial Intelligence (cs.AI); Computation and Language (cs.CL)

+ Keywords: Large-scale vision-language pre-trained, Large-scale vision-language, applied to diverse, diverse applications, fine-tuning VLP models

+ Comments:

+

+ Abstract:Large-scale vision-language pre-trained (VLP) models (e.g., CLIP) are renowned for their versatility, as they can be applied to diverse applications in a zero-shot setup. However, when these models are used in specific domains, their performance often falls short due to domain gaps or the under-representation of these domains in the training data. While fine-tuning VLP models on custom datasets with human-annotated labels can address this issue, annotating even a small-scale dataset (e.g., 100k samples) can be an expensive endeavor, often requiring expert annotators if the task is complex. To address these challenges, we propose LatteCLIP, an unsupervised method for fine-tuning CLIP models on classification with known class names in custom domains, without relying on human annotations. Our method leverages Large Multimodal Models (LMMs) to generate expressive textual descriptions for both individual images and groups of images. These provide additional contextual information to guide the fine-tuning process in the custom domains. Since LMM-generated descriptions are prone to hallucination or missing details, we introduce a novel strategy to distill only the useful information and stabilize the training. Specifically, we learn rich per-class prototype representations from noisy generated texts and dual pseudo-labels. Our experiments on 10 domain-specific datasets show that LatteCLIP outperforms pre-trained zero-shot methods by an average improvement of +4.74 points in top-1 accuracy and other state-of-the-art unsupervised methods by +3.45 points.

+

+

+

+ 2. 【2410.08202】Mono-InternVL: Pushing the Boundaries of Monolithic Multimodal Large Language Models with Endogenous Visual Pre-training

+ Link: https://arxiv.org/abs/2410.08202

+ Authors: Gen Luo, Xue Yang, Wenhan Dou, Zhaokai Wang, Jifeng Dai, Yu Qiao, Xizhou Zhu

+ Categories: Computer Vision and Pattern Recognition (cs.CV); Computation and Language (cs.CL)

+ Keywords: Large Language Models, Multimodal Large Language, Language Models, Large Language, monolithic Multimodal Large

+ Comments:

+

+ Abstract:The rapid advancement of Large Language Models (LLMs) has led to an influx of efforts to extend their capabilities to multimodal tasks. Among them, growing attention has been focused on monolithic Multimodal Large Language Models (MLLMs) that integrate visual encoding and language decoding into a single LLM. Despite the structural simplicity and deployment-friendliness, training a monolithic MLLM with promising performance still remains challenging. In particular, the popular approaches adopt continuous pre-training to extend a pre-trained LLM to a monolithic MLLM, which suffers from catastrophic forgetting and leads to performance degeneration. In this paper, we aim to overcome this limitation from the perspective of delta tuning. Specifically, our core idea is to embed visual parameters into a pre-trained LLM, thereby incrementally learning visual knowledge from massive data via delta tuning, i.e., freezing the LLM when optimizing the visual parameters. Based on this principle, we present Mono-InternVL, a novel monolithic MLLM that seamlessly integrates a set of visual experts via a multimodal mixture-of-experts structure. Moreover, we propose an innovative pre-training strategy to maximize the visual capability of Mono-InternVL, namely Endogenous Visual Pre-training (EViP). In particular, EViP is designed as a progressive learning process for visual experts, which aims to fully exploit the visual knowledge from noisy data to high-quality data. To validate our approach, we conduct extensive experiments on 16 benchmarks. Experimental results not only validate the superior performance of Mono-InternVL compared to the state-of-the-art MLLM on 6 multimodal benchmarks, e.g., +113 points over InternVL-1.5 on OCRBench, but also confirm its better deployment efficiency, with first token latency reduced by up to 67%.

+

+

+

+ 3. 【2410.08197】From Exploration to Mastery: Enabling LLMs to Master Tools via Self-Driven Interactions

+ Link: https://arxiv.org/abs/2410.08197

+ Authors: Changle Qu, Sunhao Dai, Xiaochi Wei, Hengyi Cai, Shuaiqiang Wang, Dawei Yin, Jun Xu, Ji-Rong Wen

+ Categories: Computation and Language (cs.CL); Artificial Intelligence (cs.AI)

+ Keywords: Large Language Models, enables Large Language, Language Models, Large Language, learning enables Large

+ Comments:

+

+ Abstract:Tool learning enables Large Language Models (LLMs) to interact with external environments by invoking tools, serving as an effective strategy to mitigate the limitations inherent in their pre-training data. In this process, tool documentation plays a crucial role by providing usage instructions for LLMs, thereby facilitating effective tool utilization. This paper concentrates on the critical challenge of bridging the comprehension gap between LLMs and external tools due to the inadequacies and inaccuracies inherent in existing human-centric tool documentation. We propose a novel framework, DRAFT, aimed at Dynamically Refining tool documentation through the Analysis of Feedback and Trails emanating from LLMs' interactions with external tools. This methodology pivots on an innovative trial-and-error approach, consisting of three distinct learning phases: experience gathering, learning from experience, and documentation rewriting, to iteratively enhance the tool documentation. This process is further optimized by implementing a diversity-promoting exploration strategy to ensure explorative diversity and a tool-adaptive termination mechanism to prevent overfitting while enhancing efficiency. Extensive experiments on multiple datasets demonstrate that DRAFT's iterative, feedback-based refinement significantly ameliorates documentation quality, fostering a deeper comprehension and more effective utilization of tools by LLMs. Notably, our analysis reveals that the tool documentation refined via our approach demonstrates robust cross-model generalization capabilities.

+

+

+

+ 4. 【2410.08196】MathCoder2: Better Math Reasoning from Continued Pretraining on Model-translated Mathematical Code

+ Link: https://arxiv.org/abs/2410.08196

+ Authors: Zimu Lu, Aojun Zhou, Ke Wang, Houxing Ren, Weikang Shi, Junting Pan, Mingjie Zhan, Hongsheng Li

+ Categories: Computation and Language (cs.CL); Artificial Intelligence (cs.AI); Computer Vision and Pattern Recognition (cs.CV)

+ Keywords: Code, mathematical, precision and accuracy, reasoning, mathematical reasoning

+ Comments: [https://github.com/mathllm/MathCoder2](https://github.com/mathllm/MathCoder2)

+

+ Abstract:Code has been shown to be effective in enhancing the mathematical reasoning abilities of large language models due to its precision and accuracy. Previous works involving continued mathematical pretraining often include code that utilizes math-related packages, which are primarily designed for fields such as engineering, machine learning, signal processing, or module testing, rather than being directly focused on mathematical reasoning. In this paper, we introduce a novel method for generating mathematical code accompanied with corresponding reasoning steps for continued pretraining. Our approach begins with the construction of a high-quality mathematical continued pretraining dataset by incorporating math-related web data, code using mathematical packages, math textbooks, and synthetic data. Next, we construct reasoning steps by extracting LaTeX expressions, the conditions needed for the expressions, and the results of the expressions from the previously collected dataset. Based on this extracted information, we generate corresponding code to accurately capture the mathematical reasoning process. Appending the generated code to each reasoning step results in data consisting of paired natural language reasoning steps and their corresponding code. Combining this data with the original dataset results in a 19.2B-token high-performing mathematical pretraining corpus, which we name MathCode-Pile. Training several popular base models with this corpus significantly improves their mathematical abilities, leading to the creation of the MathCoder2 family of models. All of our data processing and training code is open-sourced, ensuring full transparency and easy reproducibility of the entire data collection and training pipeline. The code is released at https://github.com/mathllm/MathCoder2.

+

+

+

+ 5. 【2410.08193】GenARM: Reward Guided Generation with Autoregressive Reward Model for Test-time Alignment

+ Link: https://arxiv.org/abs/2410.08193

+ Authors: Yuancheng Xu, Udari Madhushani Sehwag, Alec Koppel, Sicheng Zhu, Bang An, Furong Huang, Sumitra Ganesh

+ Categories: Computation and Language (cs.CL)

+ Keywords: Large Language Models, Large Language, exhibit impressive capabilities, Language Models, exhibit impressive

+ Comments:

+

+ Abstract:Large Language Models (LLMs) exhibit impressive capabilities but require careful alignment with human preferences. Traditional training-time methods finetune LLMs using human preference datasets but incur significant training costs and require repeated training to handle diverse user preferences. Test-time alignment methods address this by using reward models (RMs) to guide frozen LLMs without retraining. However, existing test-time approaches rely on trajectory-level RMs which are designed to evaluate complete responses, making them unsuitable for autoregressive text generation that requires computing next-token rewards from partial responses. To address this, we introduce GenARM, a test-time alignment approach that leverages the Autoregressive Reward Model--a novel reward parametrization designed to predict next-token rewards for efficient and effective autoregressive generation. Theoretically, we demonstrate that this parametrization can provably guide frozen LLMs toward any distribution achievable by traditional RMs within the KL-regularized reinforcement learning framework. Experimental results show that GenARM significantly outperforms prior test-time alignment baselines and matches the performance of training-time methods. Additionally, GenARM enables efficient weak-to-strong guidance, aligning larger LLMs with smaller RMs without the high costs of training larger models. Furthermore, GenARM supports multi-objective alignment, allowing real-time trade-offs between preference dimensions and catering to diverse user preferences without retraining.
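
The idea of steering a frozen LLM with next-token rewards can be illustrated with a minimal sketch. It assumes the standard closed form for KL-regularized objectives, in which the guided distribution is proportional to the base probability times `exp(reward / beta)`. The function name, the `beta` parameter, and the toy numbers are hypothetical and are not taken from the paper.

```python
import math


def guided_next_token_probs(base_logprobs, token_rewards, beta=1.0):
    """Re-weight a frozen model's next-token distribution with per-token rewards.

    Assumption (not from the paper's code): p(token) is proportional to
    p_base(token) * exp(reward(token) / beta).
    """
    # Add the scaled reward to each base log-probability.
    scores = [lp + r / beta for lp, r in zip(base_logprobs, token_rewards)]
    # Softmax with max-subtraction for numerical stability.
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Toy vocabulary of three tokens; the reward model prefers token 1.
base = [math.log(0.5), math.log(0.3), math.log(0.2)]
rewards = [0.0, 1.0, 0.0]
probs = guided_next_token_probs(base, rewards, beta=0.5)
print(probs)
```

A smaller `beta` lets the reward dominate the base model, while a large `beta` leaves the base distribution almost unchanged.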

+

+

+

+ 6. 【2410.08182】MRAG-Bench: Vision-Centric Evaluation for Retrieval-Augmented Multimodal Models

+ Link: https://arxiv.org/abs/2410.08182

+ Authors: Wenbo Hu, Jia-Chen Gu, Zi-Yi Dou, Mohsen Fayyaz, Pan Lu, Kai-Wei Chang, Nanyun Peng

+ Categories: Computer Vision and Pattern Recognition (cs.CV); Artificial Intelligence (cs.AI); Computation and Language (cs.CL)

+ Keywords: Existing multimodal retrieval, retrieval benchmarks primarily, benchmarks primarily focus, Existing multimodal, primarily focus

+ Comments: [https://mragbench.github.io](https://mragbench.github.io)

+

+ Abstract:Existing multimodal retrieval benchmarks primarily focus on evaluating whether models can retrieve and utilize external textual knowledge for question answering. However, there are scenarios where retrieving visual information is either more beneficial or easier to access than textual data. In this paper, we introduce a multimodal retrieval-augmented generation benchmark, MRAG-Bench, in which we systematically identify and categorize scenarios where visually augmented knowledge is better than textual knowledge, for instance, more images from varying viewpoints. MRAG-Bench consists of 16,130 images and 1,353 human-annotated multiple-choice questions across 9 distinct scenarios. With MRAG-Bench, we conduct an evaluation of 10 open-source and 4 proprietary large vision-language models (LVLMs). Our results show that all LVLMs exhibit greater improvements when augmented with images compared to textual knowledge, confirming that MRAG-Bench is vision-centric. Additionally, we conduct extensive analysis with MRAG-Bench, which offers valuable insights into retrieval-augmented LVLMs. Notably, the top-performing model, GPT-4o, faces challenges in effectively leveraging retrieved knowledge, achieving only a 5.82% improvement with ground-truth information, in contrast to a 33.16% improvement observed in human participants. These findings highlight the importance of MRAG-Bench in encouraging the community to enhance LVLMs' ability to utilize retrieved visual knowledge more effectively.

+

+

+

+ 7. 【2410.08174】Sample then Identify: A General Framework for Risk Control and Assessment in Multimodal Large Language Models

+ Link: https://arxiv.org/abs/2410.08174

+ Authors: Qingni Wang, Tiantian Geng, Zhiyuan Wang, Teng Wang, Bo Fu, Feng Zheng

+ Categories: Computation and Language (cs.CL); Artificial Intelligence (cs.AI); Machine Learning (cs.LG); Multimedia (cs.MM)

+ Keywords: Multimodal Large Language, Multimodal Large, Large Language Models, significant trustworthiness issues, encounter significant trustworthiness

+ Comments: 15 pages, 6 figures

+

+ Abstract:Multimodal Large Language Models (MLLMs) exhibit promising advancements across various tasks, yet they still encounter significant trustworthiness issues. Prior studies apply Split Conformal Prediction (SCP) in language modeling to construct prediction sets with statistical guarantees. However, these methods typically rely on internal model logits or are restricted to multiple-choice settings, which hampers their generalizability and adaptability in dynamic, open-ended environments. In this paper, we introduce TRON, a two-step framework for risk control and assessment, applicable to any MLLM that supports sampling in both open-ended and closed-ended scenarios. TRON comprises two main components: (1) a novel conformal score to sample response sets of minimum size, and (2) a nonconformity score to identify high-quality responses based on self-consistency theory, controlling the error rates by two specific risk levels. Furthermore, we investigate semantic redundancy in prediction sets within open-ended contexts for the first time, leading to a promising evaluation metric for MLLMs based on average set size. Our comprehensive experiments across four Video Question-Answering (VideoQA) datasets utilizing eight MLLMs show that TRON achieves desired error rates bounded by two user-specified risk levels. Additionally, deduplicated prediction sets maintain adaptiveness while being more efficient and stable for risk assessment under different risk levels.
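
For readers unfamiliar with Split Conformal Prediction, the calibration step can be sketched as follows. This is generic SCP and not TRON's specific conformal or nonconformity scores; the function names and toy scores are hypothetical. Given nonconformity scores on a held-out calibration set and a risk level `alpha`, the threshold is the `ceil((n + 1) * (1 - alpha))`-th smallest calibration score, and a prediction set keeps every candidate whose score does not exceed it.

```python
import math


def conformal_threshold(calibration_scores, alpha):
    """Return the split-conformal threshold for risk level alpha."""
    n = len(calibration_scores)
    k = math.ceil((n + 1) * (1 - alpha))
    if k > n:
        # Too few calibration points for this alpha: accept everything.
        return float("inf")
    return sorted(calibration_scores)[k - 1]


def prediction_set(candidate_scores, threshold):
    """Keep candidates whose nonconformity score is within the threshold."""
    return [label for label, score in candidate_scores.items() if score <= threshold]


# Toy calibration scores (lower means the true answer conformed better).
calibration = [0.9, 0.1, 0.5, 0.3, 0.7, 0.2, 0.8, 0.4, 0.6]
threshold = conformal_threshold(calibration, alpha=0.5)
kept = prediction_set({"a": 0.2, "b": 0.5, "c": 0.9}, threshold)
print(threshold, kept)
```

Under the usual exchangeability assumption, sets built this way miss the correct answer with probability at most `alpha`.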

+

+

+

+ 8. 【2410.08164】Agent S: An Open Agentic Framework that Uses Computers Like a Human

+ Link: https://arxiv.org/abs/2410.08164

+ Authors: Saaket Agashe, Jiuzhou Han, Shuyu Gan, Jiachen Yang, Ang Li, Xin Eric Wang

+ Categories: Artificial Intelligence (cs.AI); Computation and Language (cs.CL); Computer Vision and Pattern Recognition (cs.CV)

+ Keywords: Graphical User Interface, Graphical User, enables autonomous interaction, transforming human-computer interaction, open agentic framework

+ Comments: 23 pages, 16 figures, 9 tables

+

+ Abstract:We present Agent S, an open agentic framework that enables autonomous interaction with computers through a Graphical User Interface (GUI), aimed at transforming human-computer interaction by automating complex, multi-step tasks. Agent S aims to address three key challenges in automating computer tasks: acquiring domain-specific knowledge, planning over long task horizons, and handling dynamic, non-uniform interfaces. To this end, Agent S introduces experience-augmented hierarchical planning, which learns from external knowledge search and internal experience retrieval at multiple levels, facilitating efficient task planning and subtask execution. In addition, it employs an Agent-Computer Interface (ACI) to better elicit the reasoning and control capabilities of GUI agents based on Multimodal Large Language Models (MLLMs). Evaluation on the OSWorld benchmark shows that Agent S outperforms the baseline by 9.37% on success rate (an 83.6% relative improvement) and achieves a new state-of-the-art. Comprehensive analysis highlights the effectiveness of individual components and provides insights for future improvements. Furthermore, Agent S demonstrates broad generalizability to different operating systems on a newly-released WindowsAgentArena benchmark. Code available at this https URL.
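
The two improvement figures in this abstract can be reconciled with a quick back-of-the-envelope calculation. It assumes that 9.37% is an absolute gain in percentage points and 83.6% is that gain relative to the baseline. The resulting values are derived only from these two numbers and may differ slightly from the paper's reported results because of rounding.

```latex
\text{relative gain} = \frac{\text{absolute gain}}{\text{baseline}}
\;\Rightarrow\;
\text{baseline} \approx \frac{9.37}{0.836} \approx 11.2\%,
\qquad
\text{Agent S} \approx 11.2\% + 9.37\% \approx 20.6\%
```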

+

+

+

+ 9. 【2410.08162】The Effect of Surprisal on Reading Times in Information Seeking and Repeated Reading

+ Link: https://arxiv.org/abs/2410.08162

+ Authors: Keren Gruteke Klein, Yoav Meiri, Omer Shubi, Yevgeni Berzak

+ Categories: Computation and Language (cs.CL)

+ Keywords: investigation in psycholinguistics, central topic, topic of investigation, processing, surprisal

+ Comments: Accepted to CoNLL

+

+ Abstract:The effect of surprisal on processing difficulty has been a central topic of investigation in psycholinguistics. Here, we use eyetracking data to examine three language processing regimes that are common in daily life but have not been addressed with respect to this question: information seeking, repeated processing, and the combination of the two. Using standard regime-agnostic surprisal estimates we find that the prediction of surprisal theory regarding the presence of a linear effect of surprisal on processing times, extends to these regimes. However, when using surprisal estimates from regime-specific contexts that match the contexts and tasks given to humans, we find that in information seeking, such estimates do not improve the predictive power of processing times compared to standard surprisals. Further, regime-specific contexts yield near zero surprisal estimates with no predictive power for processing times in repeated reading. These findings point to misalignments of task and memory representations between humans and current language models, and question the extent to which such models can be used for estimating cognitively relevant quantities. We further discuss theoretical challenges posed by these results.

+

+

+

+ 10. 【2410.08146】Rewarding Progress: Scaling Automated Process Verifiers for LLM Reasoning

+ Link: https://arxiv.org/abs/2410.08146

+ Authors: Amrith Setlur, Chirag Nagpal, Adam Fisch, Xinyang Geng, Jacob Eisenstein, Rishabh Agarwal, Alekh Agarwal, Jonathan Berant, Aviral Kumar

+ Categories: Machine Learning (cs.LG); Computation and Language (cs.CL)

+ Keywords: large language models, promising approach, large language, reward models, language models

+ Comments:

+

+ Abstract:A promising approach for improving reasoning in large language models is to use process reward models (PRMs). PRMs provide feedback at each step of a multi-step reasoning trace, potentially improving credit assignment over outcome reward models (ORMs) that only provide feedback at the final step. However, collecting dense, per-step human labels is not scalable, and training PRMs from automatically-labeled data has thus far led to limited gains. To improve a base policy by running search against a PRM or using it as dense rewards for reinforcement learning (RL), we ask: "How should we design process rewards?". Our key insight is that, to be effective, the process reward for a step should measure progress: a change in the likelihood of producing a correct response in the future, before and after taking the step, corresponding to the notion of step-level advantages in RL. Crucially, this progress should be measured under a prover policy distinct from the base policy. We theoretically characterize the set of good provers and our results show that optimizing process rewards from such provers improves exploration during test-time search and online RL. In fact, our characterization shows that weak prover policies can substantially improve a stronger base policy, which we also observe empirically. We validate our claims by training process advantage verifiers (PAVs) to predict progress under such provers, and show that compared to ORMs, test-time search against PAVs is $8\%$ more accurate, and $1.5-5\times$ more compute-efficient. Online RL with dense rewards from PAVs enables one of the first results with $5-6\times$ gain in sample efficiency, and $6\%$ gain in accuracy, over ORMs.
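
The notion of "progress" described in this abstract can be written as a step-level advantage. The notation below is an assumption made for illustration and is not copied from the paper: $s_h$ is the reasoning prefix before step $h$, $a_h$ is the step taken, and $\mu$ is the prover policy, which is distinct from the base policy.

```latex
A^{\mu}(s_h, a_h) \;=\; Q^{\mu}(s_h, a_h) \;-\; V^{\mu}(s_h)
```

Here $V^{\mu}(s_h)$ stands for the probability that $\mu$ eventually reaches a correct answer when continuing from the prefix $s_h$, and $Q^{\mu}(s_h, a_h)$ is the same probability after the step $a_h$ has been appended. A positive value means the step made a correct final answer more likely under the prover.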

+

+

+

+ 11. 【2410.08145】Insight Over Sight? Exploring the Vision-Knowledge Conflicts in Multimodal LLMs

+ Link: https://arxiv.org/abs/2410.08145

+ Authors: Xiaoyuan Liu, Wenxuan Wang, Youliang Yuan, Jen-tse Huang, Qiuzhi Liu, Pinjia He, Zhaopeng Tu

+ Categories: Computation and Language (cs.CL); Computer Vision and Pattern Recognition (cs.CV)

+ Keywords: Multimodal Large Language, Large Language Models, Multimodal Large, Large Language, contradicts model internal

+ Comments:

+

+ Abstract:This paper explores the problem of commonsense-level vision-knowledge conflict in Multimodal Large Language Models (MLLMs), where visual information contradicts model's internal commonsense knowledge (see Figure 1). To study this issue, we introduce an automated pipeline, augmented with human-in-the-loop quality control, to establish a benchmark aimed at simulating and assessing the conflicts in MLLMs. Utilizing this pipeline, we have crafted a diagnostic benchmark comprising 374 original images and 1,122 high-quality question-answer (QA) pairs. This benchmark covers two types of conflict target and three question difficulty levels, providing a thorough assessment tool. Through this benchmark, we evaluate the conflict-resolution capabilities of nine representative MLLMs across various model families and find a noticeable over-reliance on textual queries. Drawing on these findings, we propose a novel prompting strategy, "Focus-on-Vision" (FoV), which markedly enhances MLLMs' ability to favor visual data over conflicting textual knowledge. Our detailed analysis and the newly proposed strategy significantly advance the understanding and mitigating of vision-knowledge conflicts in MLLMs. The data and code are made publicly available.

+

+

+

+ 12. 【2410.08143】DelTA: An Online Document-Level Translation Agent Based on Multi-Level Memory

+ Link: https://arxiv.org/abs/2410.08143

+ Authors: Yutong Wang, Jiali Zeng, Xuebo Liu, Derek F. Wong, Fandong Meng, Jie Zhou, Min Zhang

+ Categories: Computation and Language (cs.CL); Artificial Intelligence (cs.AI)

+ Keywords: Large language models, Large language, reasonable quality improvements, achieved reasonable quality, language models

+ Comments:

+

+ Abstract:Large language models (LLMs) have achieved reasonable quality improvements in machine translation (MT). However, most current research on MT-LLMs still faces significant challenges in maintaining translation consistency and accuracy when processing entire documents. In this paper, we introduce DelTA, a Document-levEL Translation Agent designed to overcome these limitations. DelTA features a multi-level memory structure that stores information across various granularities and spans, including Proper Noun Records, Bilingual Summary, Long-Term Memory, and Short-Term Memory, which are continuously retrieved and updated by auxiliary LLM-based components. Experimental results indicate that DelTA significantly outperforms strong baselines in terms of translation consistency and quality across four open/closed-source LLMs and two representative document translation datasets, achieving an increase in consistency scores by up to 4.58 percentage points and in COMET scores by up to 3.16 points on average. DelTA employs a sentence-by-sentence translation strategy, ensuring no sentence omissions and offering a memory-efficient solution compared to the mainstream method. Furthermore, DelTA improves pronoun translation accuracy, and the summary component of the agent also shows promise as a tool for query-based summarization tasks. We release our code and data at this https URL.

+

+

+

+ 13. 【2410.08133】Assessing Episodic Memory in LLMs with Sequence Order Recall Tasks

+ Link: https://arxiv.org/abs/2410.08133

+ Authors: Mathis Pink, Vy A. Vo, Qinyuan Wu, Jianing Mu, Javier S. Turek, Uri Hasson, Kenneth A. Norman, Sebastian Michelmann, Alexander Huth, Mariya Toneva

+ Categories: Computation and Language (cs.CL); Artificial Intelligence (cs.AI); Machine Learning (cs.LG)

+ Keywords: primarily assessing semantic, Current LLM benchmarks, Current LLM, assessing semantic aspects, semantic relations

+ Comments:

+

+ Abstract:Current LLM benchmarks focus on evaluating models' memory of facts and semantic relations, primarily assessing semantic aspects of long-term memory. However, in humans, long-term memory also includes episodic memory, which links memories to their contexts, such as the time and place they occurred. The ability to contextualize memories is crucial for many cognitive tasks and everyday functions. This form of memory has not been evaluated in LLMs with existing benchmarks. To address the gap in evaluating memory in LLMs, we introduce Sequence Order Recall Tasks (SORT), which we adapt from tasks used to study episodic memory in cognitive psychology. SORT requires LLMs to recall the correct order of text segments, and provides a general framework that is both easily extendable and does not require any additional annotations. We present an initial evaluation dataset, Book-SORT, comprising 36k pairs of segments extracted from 9 books recently added to the public domain. Based on a human experiment with 155 participants, we show that humans can recall sequence order based on long-term memory of a book. We find that models can perform the task with high accuracy when relevant text is given in-context during the SORT evaluation. However, when presented with the book text only during training, LLMs' performance on SORT falls short. By allowing to evaluate more aspects of memory, we believe that SORT will aid in the emerging development of memory-augmented models.
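
A sequence order recall item of the kind described above could be built roughly as follows. This is only an illustrative sketch: the function name, the word-based segmentation, and the output fields are hypothetical and do not reproduce the Book-SORT construction procedure. Two non-overlapping segments are drawn from a text, shown in random order, and labeled with the one that occurs first in the source.

```python
import random


def make_sort_sample(text, segment_len, rng):
    """Draw two non-overlapping word segments and record which came first."""
    words = text.split()
    if len(words) < 2 * segment_len:
        raise ValueError("text is too short for two non-overlapping segments")
    # The earlier segment must leave room for a later one.
    first_start = rng.randrange(0, len(words) - 2 * segment_len + 1)
    second_start = rng.randrange(first_start + segment_len,
                                 len(words) - segment_len + 1)
    earlier = " ".join(words[first_start:first_start + segment_len])
    later = " ".join(words[second_start:second_start + segment_len])
    # Randomize presentation order so position carries no information.
    swap = rng.random() < 0.5
    seg_a, seg_b = (later, earlier) if swap else (earlier, later)
    return {"segment_a": seg_a, "segment_b": seg_b,
            "answer": "B" if swap else "A"}


rng = random.Random(0)
text = " ".join(f"w{i:02d}" for i in range(20))  # toy "book" of 20 unique words
print(make_sort_sample(text, 3, rng))
```

Because the label comes from the positions in the source text, no extra annotation is needed, which matches the abstract's point that the framework requires no additional annotations.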

+

+

+

+ 14. 【2410.08130】Think Beyond Size: Dynamic Prompting for More Effective Reasoning

+ Link: https://arxiv.org/abs/2410.08130

+ Authors: Kamesh R

+ Categories: Machine Learning (cs.LG); Computation and Language (cs.CL)

+ Keywords: Large Language Models, Large Language, paper presents Dynamic, presents Dynamic Prompting, capabilities of Large

+ Comments: Submitted to ICLR 2025. This is a preprint version. Future revisions will include additional evaluations and refinements

+

+ Abstract:This paper presents Dynamic Prompting, a novel framework aimed at improving the reasoning capabilities of Large Language Models (LLMs). In contrast to conventional static prompting methods, Dynamic Prompting enables the adaptive modification of prompt sequences and step counts based on real-time task complexity and model performance. This dynamic adaptation facilitates more efficient problem-solving, particularly in smaller models, by reducing hallucinations and repetitive cycles. Our empirical evaluations demonstrate that Dynamic Prompting allows smaller LLMs to perform competitively with much larger models, thereby challenging the conventional emphasis on model size as the primary determinant of reasoning efficacy.

+

+

+

+ 15. 【2410.08126】Mars: Situated Inductive Reasoning in an Open-World Environment

+ Link: https://arxiv.org/abs/2410.08126

+ Authors: Xiaojuan Tang, Jiaqi Li, Yitao Liang, Song-chun Zhu, Muhan Zhang, Zilong Zheng

+ Categories: Machine Learning (cs.LG); Artificial Intelligence (cs.AI); Computation and Language (cs.CL)

+ Keywords: Large Language Models, Large Language, Language Models, shown remarkable success, inductive reasoning

+ Comments:

+

+ Abstract:Large Language Models (LLMs) trained on massive corpora have shown remarkable success in knowledge-intensive tasks. Yet, most of them rely on pre-stored knowledge. Inducing new general knowledge from a specific environment and performing reasoning with the acquired knowledge -- *situated inductive reasoning*, is crucial and challenging for machine intelligence. In this paper, we design Mars, an interactive environment devised for situated inductive reasoning. It introduces counter-commonsense game mechanisms by modifying terrain, survival setting and task dependency while adhering to certain principles. In Mars, agents need to actively interact with their surroundings, derive useful rules and perform decision-making tasks in specific contexts. We conduct experiments on various RL-based and LLM-based methods, finding that they all struggle on this challenging situated inductive reasoning benchmark. Furthermore, we explore *Induction from Reflection*, where we instruct agents to perform inductive reasoning from history trajectory. The superior performance underscores the importance of inductive reasoning in Mars. Through Mars, we aim to galvanize advancements in situated inductive reasoning and set the stage for developing the next generation of AI systems that can reason in an adaptive and context-sensitive way.

+

+

+

+ 16. 【2410.08115】Optima: Optimizing Effectiveness and Efficiency for LLM-Based Multi-Agent System

+ Link: https://arxiv.org/abs/2410.08115

+ Authors: Weize Chen, Jiarui Yuan, Chen Qian, Cheng Yang, Zhiyuan Liu, Maosong Sun

+ Categories: Computation and Language (cs.CL); Artificial Intelligence (cs.AI)

+ Keywords: Large Language Model, Large Language, Language Model, parameter-updating optimization methods, low communication efficiency

+ Comments: Under review

+

+ Abstract:Large Language Model (LLM) based multi-agent systems (MAS) show remarkable potential in collaborative problem-solving, yet they still face critical challenges: low communication efficiency, poor scalability, and a lack of effective parameter-updating optimization methods. We present Optima, a novel framework that addresses these issues by significantly enhancing both communication efficiency and task effectiveness in LLM-based MAS through LLM training. Optima employs an iterative generate, rank, select, and train paradigm with a reward function balancing task performance, token efficiency, and communication readability. We explore various RL algorithms, including Supervised Fine-Tuning, Direct Preference Optimization, and their hybrid approaches, providing insights into their effectiveness-efficiency trade-offs. We integrate Monte Carlo Tree Search-inspired techniques for DPO data generation, treating conversation turns as tree nodes to explore diverse interaction paths. Evaluated on common multi-agent tasks, including information-asymmetric question answering and complex reasoning, Optima shows consistent and substantial improvements over single-agent baselines and vanilla MAS based on Llama 3 8B, achieving up to 2.8x performance gain with less than 10% tokens on tasks requiring heavy information exchange. Moreover, Optima's efficiency gains open new possibilities for leveraging inference-compute more effectively, leading to improved inference-time scaling laws. By addressing fundamental challenges in LLM-based MAS, Optima shows the potential towards scalable, efficient, and effective MAS (this https URL).

+

+

+

+ 17. 【2410.08113】Robust AI-Generated Text Detection by Restricted Embeddings

+ Link: https://arxiv.org/abs/2410.08113

+ Authors: Kristian Kuznetsov, Eduard Tulchinskii, Laida Kushnareva, German Magai, Serguei Barannikov, Sergey Nikolenko, Irina Piontkovskaya

+ Categories: Computation and Language (cs.CL); Artificial Intelligence (cs.AI)

+ Keywords: texts makes detecting, Growing amount, AI-generated texts makes, content more difficult, amount and quality

+ Comments: Accepted to Findings of EMNLP 2024

+

+ Abstract:Growing amount and quality of AI-generated texts makes detecting such content more difficult. In most real-world scenarios, the domain (style and topic) of generated data and the generator model are not known in advance. In this work, we focus on the robustness of classifier-based detectors of AI-generated text, namely their ability to transfer to unseen generators or semantic domains. We investigate the geometry of the embedding space of Transformer-based text encoders and show that clearing out harmful linear subspaces helps to train a robust classifier, ignoring domain-specific spurious features. We investigate several subspace decomposition and feature selection strategies and achieve significant improvements over state of the art methods in cross-domain and cross-generator transfer. Our best approaches for head-wise and coordinate-based subspace removal increase the mean out-of-distribution (OOD) classification score by up to 9% and 14% in particular setups for RoBERTa and BERT embeddings respectively. We release our code and data: this https URL

+

+

+

+ 18. 【2410.08109】A Closer Look at Machine Unlearning for Large Language Models

+ Link: https://arxiv.org/abs/2410.08109

+ Authors: Xiaojian Yuan, Tianyu Pang, Chao Du, Kejiang Chen, Weiming Zhang, Min Lin

+ Categories: Computation and Language (cs.CL); Artificial Intelligence (cs.AI); Machine Learning (cs.LG)

+ Keywords: Large language models, Large language, raising privacy, legal concerns, memorize sensitive

+ Comments:

+

+ Click to view abstract

+ Abstract:Large language models (LLMs) may memorize sensitive or copyrighted content, raising privacy and legal concerns. Due to the high cost of retraining from scratch, researchers attempt to employ machine unlearning to remove specific content from LLMs while preserving the overall performance. In this paper, we discuss several issues in machine unlearning for LLMs and provide our insights on possible approaches. To address the issue of inadequate evaluation of model outputs after unlearning, we introduce three additional metrics to evaluate token diversity, sentence semantics, and factual correctness. We then categorize unlearning methods into untargeted and targeted, and discuss their issues respectively. Specifically, the behavior that untargeted unlearning attempts to approximate is unpredictable and may involve hallucinations, and existing regularization is insufficient for targeted unlearning. To alleviate these issues, we propose using the objective of maximizing entropy (ME) for untargeted unlearning and incorporate answer preservation (AP) loss as regularization for targeted unlearning. Experimental results across three scenarios, i.e., fictitious unlearning, continual unlearning, and real-world unlearning, demonstrate the effectiveness of our approaches. The code is available at this https URL.
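To make the "maximizing entropy" objective mentioned above more concrete, here is a minimal sketch that scores one next-token distribution by its KL divergence from the uniform distribution, which is zero exactly when entropy is maximal. Writing the objective as KL(p || uniform) is an assumption made for illustration. The exact loss used in the paper may differ, and the function names are hypothetical.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    z = sum(exps)
    return [e / z for e in exps]


def entropy(probs: Sequence[float]) -> float:
    """Shannon entropy in nats; zero-probability terms contribute nothing."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def me_loss(logits: Sequence[float]) -> float:
    """KL(p || uniform) = log(V) - H(p). Minimizing it maximizes the entropy of p."""
    probs = softmax(logits)
    return math.log(len(probs)) - entropy(probs)
```

A confident prediction (one large logit) gives a loss close to log(V), while flat logits give a loss of zero.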

+

+

+

+ 19. 【2410.08105】What Makes Large Language Models Reason in (Multi-Turn) Code Generation?

+ Link: https://arxiv.org/abs/2410.08105

+ Authors: Kunhao Zheng, Juliette Decugis, Jonas Gehring, Taco Cohen, Benjamin Negrevergne, Gabriel Synnaeve

+ Categories: Computation and Language (cs.CL)

+ Keywords: popular vehicle, vehicle for improving, improving the outputs, large language models, Prompting techniques

+ Comments:

+

+ Click to view abstract

+ Abstract:Prompting techniques such as chain-of-thought have established themselves as a popular vehicle for improving the outputs of large language models (LLMs). For code generation, however, their exact mechanics and efficacy are under-explored. We thus investigate the effects of a wide range of prompting strategies with a focus on automatic re-prompting over multiple turns and computational requirements. After systematically decomposing reasoning, instruction, and execution feedback prompts, we conduct an extensive grid search on the competitive programming benchmarks CodeContests and TACO for multiple LLM families and sizes (Llama 3.0 and 3.1, 8B, 70B, 405B, and GPT-4o). Our study reveals strategies that consistently improve performance across all models with small and large sampling budgets. We then show how finetuning with such an optimal configuration allows models to internalize the induced reasoning process and obtain improvements in performance and scalability for multi-turn code generation.

+

+

+

+ 20. 【2410.08102】Multi-Agent Collaborative Data Selection for Efficient LLM Pretraining

+ Link: https://arxiv.org/abs/2410.08102

+ Authors: Tianyi Bai, Ling Yang, Zhen Hao Wong, Jiahui Peng, Xinlin Zhuang, Chi Zhang, Lijun Wu, Qiu Jiantao, Wentao Zhang, Binhang Yuan, Conghui He

+ Categories: Computation and Language (cs.CL)

+ Keywords: Efficient data selection, Efficient data, data selection, data, Efficient

+ Comments:

+

+ Click to view abstract

+ Abstract:Efficient data selection is crucial to accelerate the pretraining of large language models (LLMs). While various methods have been proposed to enhance data efficiency, limited research has addressed the inherent conflicts between these approaches to achieve optimal data selection for LLM pretraining. To tackle this problem, we propose a novel multi-agent collaborative data selection mechanism. In this framework, each data selection method serves as an independent agent, and an agent console is designed to dynamically integrate the information from all agents throughout the LLM training process. We conduct extensive empirical studies to evaluate our multi-agent framework. The experimental results demonstrate that our approach significantly improves data efficiency, accelerates convergence in LLM training, and achieves an average performance gain of 10.5% across multiple language model benchmarks compared to the state-of-the-art methods.

+

+

+

+ 21. 【2410.08085】Can Knowledge Graphs Make Large Language Models More Trustworthy? An Empirical Study over Open-ended Question Answering

+ Link: https://arxiv.org/abs/2410.08085

+ Authors: Yuan Sui, Bryan Hooi

+ Categories: Computation and Language (cs.CL); Artificial Intelligence (cs.AI)

+ Keywords: Large Language Models, integrating Knowledge Graphs, Knowledge Graphs, Large Language, Recent works integrating

+ Comments: Work in progress

+

+ Click to view abstract

+ Abstract:Recent works integrating Knowledge Graphs (KGs) have led to promising improvements in enhancing reasoning accuracy of Large Language Models (LLMs). However, current benchmarks mainly focus on closed tasks, leaving a gap in the assessment of more complex, real-world scenarios. This gap has also obscured the evaluation of KGs' potential to mitigate the problem of hallucination in LLMs. To fill the gap, we introduce OKGQA, a new benchmark specifically designed to assess LLMs enhanced with KGs under open-ended, real-world question answering scenarios. OKGQA is designed to closely reflect the complexities of practical applications using questions of different types, and incorporates specific metrics to measure both the reduction in hallucinations and the enhancement in reasoning capabilities. To consider the scenario in which KGs may have varying levels of mistakes, we further propose another experimental setting, OKGQA-P, to assess model performance when the semantics and structure of KGs are deliberately perturbed and contaminated. OKGQA aims to (1) explore whether KGs can make LLMs more trustworthy in an open-ended setting, and (2) conduct a comparative analysis to shed light on methods and future directions for leveraging KGs to reduce LLMs' hallucination. We believe that this study can facilitate a more complete performance comparison and encourage continuous improvement in integrating KGs with LLMs.

+

+

+

+ 22. 【2410.08081】Packing Analysis: Packing Is More Appropriate for Large Models or Datasets in Supervised Fine-tuning

+ Link: https://arxiv.org/abs/2410.08081

+ Authors: Shuhe Wang, Guoyin Wang, Jiwei Li, Eduard Hovy, Chen Guo

+ Categories: Machine Learning (cs.LG); Artificial Intelligence (cs.AI); Computation and Language (cs.CL)

+ Keywords: maximum input length, optimization technique designed, maximize hardware resource, model maximum input, hardware resource efficiency

+ Comments:

+

+ Click to view abstract

+ Abstract:Packing, initially utilized in the pre-training phase, is an optimization technique designed to maximize hardware resource efficiency by combining different training sequences to fit the model's maximum input length. Although it has demonstrated effectiveness during pre-training, there remains a lack of comprehensive analysis for the supervised fine-tuning (SFT) stage on the following points: (1) whether packing can effectively enhance training efficiency while maintaining performance, (2) the suitable size of the model and dataset for fine-tuning with the packing method, and (3) whether packing unrelated or related training samples might cause the model to either excessively disregard or over-rely on the context.

+In this paper, we perform extensive comparisons between SFT methods using padding and packing, covering SFT datasets ranging from 69K to 1.2M and models from 8B to 70B. This provides the first comprehensive analysis of the advantages and limitations of packing versus padding, as well as practical considerations for implementing packing in various training scenarios. Our analysis covers various benchmarks, including knowledge, reasoning, and coding, as well as GPT-based evaluations, time efficiency, and other fine-tuning parameters. We also open-source our code for fine-tuning and evaluation and provide checkpoints fine-tuned on datasets of different sizes, aiming to advance future research on packing methods. Code is available at: this https URL.
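The difference between padding and packing described in this abstract can be sketched with a small greedy first-fit packer. This is only an illustration under stated assumptions: the first-fit strategy, the function names, and the rule that every padded sequence is padded to `max_len` are hypothetical choices, not the paper's implementation.

```python
from typing import List


def pack_sequences(lengths: List[int], max_len: int) -> List[List[int]]:
    """Greedy first-fit: group sequence indices into bins whose total length is <= max_len."""
    bins: List[List[int]] = []
    free: List[int] = []  # remaining space in each bin
    for idx, n in enumerate(lengths):
        if n > max_len:
            raise ValueError(f"sequence {idx} is longer than max_len")
        for b, space in enumerate(free):
            if n <= space:
                bins[b].append(idx)
                free[b] -= n
                break
        else:
            # No existing bin has room, so open a new one.
            bins.append([idx])
            free.append(max_len - n)
    return bins


def padding_waste(lengths: List[int], max_len: int) -> int:
    """Pad tokens needed when every sequence is padded to max_len on its own."""
    return sum(max_len - n for n in lengths)


def packing_waste(lengths: List[int], max_len: int) -> int:
    """Pad tokens needed when sequences are first packed into shared bins."""
    return len(pack_sequences(lengths, max_len)) * max_len - sum(lengths)
```

For lengths `[5, 3, 4, 2, 6]` and `max_len = 8`, the packer produces three bins with 4 wasted positions, against 20 wasted positions with per-sequence padding. Whether packed neighbors should attend to each other is a separate design question, which is the context issue the abstract raises in point (3).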

+



+

+

+

+

+ 23. 【2410.08068】Teaching-Inspired Integrated Prompting Framework: A Novel Approach for Enhancing Reasoning in Large Language Models

+ Link: https://arxiv.org/abs/2410.08068

+ Authors: Wenting Tan, Dongxiao Chen, Jieting Xue, Zihao Wang, Taijie Chen

+ Categories: Computation and Language (cs.CL); Artificial Intelligence (cs.AI)

+ Keywords: Large Language Models, Large Language, Language Models, exhibit impressive performance, arithmetic reasoning tasks

+ Comments:

+

+ Click to view abstract

+ Abstract:Large Language Models (LLMs) exhibit impressive performance across various domains but still struggle with arithmetic reasoning tasks. Recent work shows the effectiveness of prompt design methods in enhancing reasoning capabilities. However, these approaches overlook crucial requirements for prior knowledge of specific concepts, theorems, and tricks to tackle most arithmetic reasoning problems successfully. To address this issue, we propose a novel and effective Teaching-Inspired Integrated Framework, which emulates the instructional process of a teacher guiding students. This method equips LLMs with essential concepts, relevant theorems, and similar problems with analogous solution approaches, facilitating the enhancement of reasoning abilities. Additionally, we introduce two new Chinese datasets, MathMC and MathToF, both with detailed explanations and answers. Experiments on nine benchmarks demonstrate that our approach improves the reasoning accuracy of LLMs. With GPT-4 and our framework, we achieve new state-of-the-art performance on four math benchmarks (AddSub, SVAMP, Math23K and AQuA) with accuracies of 98.2% (+3.3%), 93.9% (+0.2%), 94.3% (+7.2%) and 81.1% (+1.2%). Our data and code are available at this https URL.

+

+

+

+ 24. 【2410.08058】Closing the Loop: Learning to Generate Writing Feedback via Language Model Simulated Student Revisions

+ Link: https://arxiv.org/abs/2410.08058

+ Authors: Inderjeet Nair, Jiaye Tan, Xiaotian Su, Anne Gere, Xu Wang, Lu Wang

+ Categories: Computation and Language (cs.CL); Artificial Intelligence (cs.AI); Machine Learning (cs.LG)

+ Keywords: Providing feedback, widely recognized, recognized as crucial, crucial for refining, students' writing skills

+ Comments: Accepted to EMNLP 2024

+

+ Click to view abstract

+ Abstract:Providing feedback is widely recognized as crucial for refining students' writing skills. Recent advances in language models (LMs) have made it possible to automatically generate feedback that is actionable and well-aligned with human-specified attributes. However, it remains unclear whether the feedback generated by these models is truly effective in enhancing the quality of student revisions. Moreover, prompting LMs with a precise set of instructions to generate feedback is nontrivial due to the lack of consensus regarding the specific attributes that can lead to improved revising performance. To address these challenges, we propose PROF that PROduces Feedback via learning from LM simulated student revisions. PROF aims to iteratively optimize the feedback generator by directly maximizing the effectiveness of students' overall revising performance as simulated by LMs. Focusing on an economic essay assignment, we empirically test the efficacy of PROF and observe that our approach not only surpasses a variety of baseline methods in effectiveness of improving students' writing but also demonstrates enhanced pedagogical values, even though it was not explicitly trained for this aspect.

+

+

+

+ 25. 【2410.08053】A Target-Aware Analysis of Data Augmentation for Hate Speech Detection

+ Link: https://arxiv.org/abs/2410.08053

+ Authors: Camilla Casula, Sara Tonelli

+ Categories: Computation and Language (cs.CL)

+ Keywords: main threats posed, Hate speech, hate speech detection, Measuring Hate Speech, social networks

+ Comments:

+

+ Click to view abstract

+ Abstract:Hate speech is one of the main threats posed by the widespread use of social networks, despite efforts to limit it. Although attention has been devoted to this issue, the lack of datasets and case studies centered around scarcely represented phenomena, such as ableism or ageism, can lead to hate speech detection systems that do not perform well on underrepresented identity groups. Given the unprecedented capabilities of LLMs in producing high-quality data, we investigate the possibility of augmenting existing data with generative language models, reducing target imbalance. We experiment with augmenting 1,000 posts from the Measuring Hate Speech corpus, an English dataset annotated with target identity information, adding around 30,000 synthetic examples using both simple data augmentation (DA) methods and different types of generative models, comparing autoregressive and sequence-to-sequence approaches. We find traditional DA methods to often be preferable to generative models, but the combination of the two tends to lead to the best results. Indeed, for some hate categories such as origin, religion, and disability, hate speech classification using augmented data for training improves by more than 10% F1 over the no augmentation baseline. This work contributes to the development of systems for hate speech detection that are not only better performing but also fairer and more inclusive towards targets that have been neglected so far.

+

+

+

+ 26. 【2410.08048】VerifierQ: Enhancing LLM Test Time Compute with Q-Learning-based Verifiers

+ Link: https://arxiv.org/abs/2410.08048

+ Authors: Jianing Qi, Hao Tang, Zhigang Zhu

+ Categories: Machine Learning (cs.LG); Computation and Language (cs.CL)

+ Keywords: test time compute, Large Language Models, Large Language, Language Models, verifier models

+ Comments:

+

+ Click to view abstract

+ Abstract:Recent advancements in test time compute, particularly through the use of verifier models, have significantly enhanced the reasoning capabilities of Large Language Models (LLMs). This generator-verifier approach closely resembles the actor-critic framework in reinforcement learning (RL). However, current verifier models in LLMs often rely on supervised fine-tuning without temporal difference learning such as Q-learning. This paper introduces VerifierQ, a novel approach that integrates Offline Q-learning into LLM verifier models. We address three key challenges in applying Q-learning to LLMs: (1) handling utterance-level Markov Decision Processes (MDPs), (2) managing large action spaces, and (3) mitigating overestimation bias. VerifierQ introduces a modified Bellman update for bounded Q-values, incorporates Implicit Q-learning (IQL) for efficient action space management, and integrates a novel Conservative Q-learning (CQL) formulation for balanced Q-value estimation. Our method enables parallel Q-value computation and improves training efficiency. While recent work has explored RL techniques like MCTS for generators, VerifierQ is among the first to investigate the verifier (critic) aspect in LLMs through Q-learning. This integration of RL principles into verifier models complements existing advancements in generator techniques, potentially enabling more robust and adaptive reasoning in LLMs. Experimental results on mathematical reasoning tasks demonstrate VerifierQ's superior performance compared to traditional supervised fine-tuning approaches, with improvements in efficiency, accuracy and robustness. By enhancing the synergy between generation and evaluation capabilities, VerifierQ contributes to the ongoing evolution of AI systems in addressing complex cognitive tasks across various domains.

+

+

+

+ 27. 【2410.08047】Divide and Translate: Compositional First-Order Logic Translation and Verification for Complex Logical Reasoning

+ Link: https://arxiv.org/abs/2410.08047

+ Authors: Hyun Ryu, Gyeongman Kim, Hyemin S. Lee, Eunho Yang

+ Categories: Computation and Language (cs.CL)

+ Keywords: large language model, reasoning tasks require, prompting still falls, falls short, tasks require

+ Comments:

+

+ Click to view abstract

+ Abstract:Complex logical reasoning tasks require a long sequence of reasoning, at which a large language model (LLM) with chain-of-thought prompting still falls short. To alleviate this issue, neurosymbolic approaches incorporate a symbolic solver. Specifically, an LLM only translates a natural language problem into a satisfiability (SAT) problem that consists of first-order logic formulas, and a sound symbolic solver returns a mathematically correct solution. However, we discover that LLMs have difficulty capturing complex logical semantics hidden in the natural language during translation. To resolve this limitation, we propose a Compositional First-Order Logic Translation. An LLM first parses a natural language sentence into newly defined logical dependency structures that consist of an atomic subsentence and its dependents, then sequentially translates the parsed subsentences. Since multiple logical dependency structures and sequential translations are possible for a single sentence, we also introduce two Verification algorithms to ensure more reliable results. We utilize a SAT solver to rigorously compare semantics of generated first-order logic formulas and select the most probable one. We evaluate the proposed method, dubbed CLOVER, on seven logical reasoning benchmarks and show that it outperforms the previous neurosymbolic approaches and achieves new state-of-the-art results.
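Comparing the semantics of two candidate translations, as the abstract describes, amounts to checking that no assignment makes the formulas disagree. The sketch below does this by brute-force truth table over propositional variables, standing in for a real SAT solver and for first-order logic. The helper name and the encoding of formulas as Python callables are hypothetical simplifications, not CLOVER's verification algorithms.

```python
from itertools import product
from typing import Callable, Dict, Sequence

Formula = Callable[[Dict[str, bool]], bool]


def equivalent(f: Formula, g: Formula, variables: Sequence[str]) -> bool:
    """f and g are equivalent iff 'f XOR g' has no satisfying assignment."""
    for values in product([False, True], repeat=len(variables)):
        env = dict(zip(variables, values))
        if f(env) != g(env):
            return False  # found an assignment on which the formulas disagree
    return True


# "p implies q", its contrapositive, and its converse.
implies = lambda e: (not e["p"]) or e["q"]
contrapositive = lambda e: e["q"] or not e["p"]
converse = lambda e: (not e["q"]) or e["p"]
```

Here `implies` and `contrapositive` are equivalent, while `converse` is not. A translation pipeline could use such a check to group candidate formulas by meaning before choosing among them.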

+

+

+

+ 28. 【2410.08044】The Rise of AI-Generated Content in Wikipedia

+ Link: https://arxiv.org/abs/2410.08044

+ Authors: Creston Brooks, Samuel Eggert, Denis Peskoff

+ Categories: Computation and Language (cs.CL)

+ Keywords: popular information sources, information sources raises, sources raises significant, raises significant concerns, concerns about accountability

+ Comments:

+

+ Click to view abstract

+ Abstract:The rise of AI-generated content in popular information sources raises significant concerns about accountability, accuracy, and bias amplification. Beyond directly impacting consumers, the widespread presence of this content poses questions for the long-term viability of training language models on vast internet sweeps. We use GPTZero, a proprietary AI detector, and Binoculars, an open-source alternative, to establish lower bounds on the presence of AI-generated content in recently created Wikipedia pages. Both detectors reveal a marked increase in AI-generated content in recent pages compared to those from before the release of GPT-3.5. With thresholds calibrated to achieve a 1% false positive rate on pre-GPT-3.5 articles, detectors flag over 5% of newly created English Wikipedia articles as AI-generated, with lower percentages for German, French, and Italian articles. Flagged Wikipedia articles are typically of lower quality and are often self-promotional or partial towards a specific viewpoint on controversial topics.
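The calibration step in this abstract (choosing a threshold that gives a 1% false positive rate on pre-GPT-3.5 articles) and the resulting lower bound can be sketched as follows. The function names are hypothetical, and the bound assumes that the false positive rate measured on old articles carries over to new human-written ones. The arithmetic is an illustration, not the paper's reported computation.

```python
from typing import Sequence


def calibrate_threshold(negative_scores: Sequence[float], target_fpr: float) -> float:
    """Pick a threshold so that at most target_fpr of known-human scores exceed it."""
    ranked = sorted(negative_scores)
    n = len(ranked)
    allowed = min(int(target_fpr * n), n - 1)  # number of negatives we may flag
    return ranked[n - allowed - 1]


def flag_rate(scores: Sequence[float], threshold: float) -> float:
    """Fraction of articles whose detector score is strictly above the threshold."""
    return sum(s > threshold for s in scores) / len(scores)


def prevalence_lower_bound(flagged_rate: float, fpr: float) -> float:
    """flagged = TPR*p + FPR*(1-p) <= p + FPR*(1-p), so p >= (flagged - FPR) / (1 - FPR)."""
    return max(0.0, (flagged_rate - fpr) / (1.0 - fpr))
```

With a flagged rate of 5% and a 1% false positive rate, the bound evaluates to roughly 4% of articles. It is a lower bound because an imperfect detector also misses some AI-generated text.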

+

+

+

+ 29. 【2410.08037】Composite Learning Units: Generalized Learning Beyond Parameter Updates to Transform LLMs into Adaptive Reasoners

+ Link: https://arxiv.org/abs/2410.08037

+ Authors: Santosh Kumar Radha, Oktay Goktas

+ Categories: Machine Learning (cs.LG); Artificial Intelligence (cs.AI); Computation and Language (cs.CL); Multiagent Systems (cs.MA)

+ Keywords: Human learning thrives, Large Language Models, Composite Learning Units, static machine learning, Human learning

+ Comments:

+

+ Click to view abstract

+ Abstract:Human learning thrives on the ability to learn from mistakes, adapt through feedback, and refine understanding, processes that are often missing in static machine learning models. In this work, we introduce Composite Learning Units (CLUs) designed to transform reasoners, such as Large Language Models (LLMs), into learners capable of generalized, continuous learning without conventional parameter updates while enhancing their reasoning abilities through continual interaction and feedback. CLUs are built on an architecture that allows a reasoning model to maintain and evolve a dynamic knowledge repository: a General Knowledge Space for broad, reusable insights and a Prompt-Specific Knowledge Space for task-specific learning. Through goal-driven interactions, CLUs iteratively refine these knowledge spaces, enabling the system to adapt dynamically to complex tasks, extract nuanced insights, and build upon past experiences autonomously. We demonstrate CLUs' effectiveness through a cryptographic reasoning task, where they continuously evolve their understanding through feedback to uncover hidden transformation rules. While conventional models struggle to grasp underlying logic, CLUs excel by engaging in an iterative, goal-oriented process. Specialized components (handling knowledge retrieval, prompt generation, and feedback analysis) work together within a reinforcing feedback loop. This approach allows CLUs to retain the memory of past failures and successes, adapt autonomously, and apply sophisticated reasoning effectively, continually learning from mistakes while also building on breakthroughs.

+

+

+

+ 30. 【2410.08027】Private Language Models via Truncated Laplacian Mechanism

+ Link: https://arxiv.org/abs/2410.08027

+ Authors: Tianhao Huang, Tao Yang, Ivan Habernal, Lijie Hu, Di Wang

+ Categories: Computation and Language (cs.CL); Artificial Intelligence (cs.AI); Machine Learning (cs.LG)

+ Keywords: Deep learning models, Deep learning, models for NLP, truncated Laplacian mechanism, NLP tasks

+ Comments: Accepted by EMNLP 2024, Main Track

+

+ Click to view abstract

+ Abstract:Deep learning models for NLP tasks are vulnerable to a variety of privacy attacks. To prevent privacy leakage, researchers have investigated word-level perturbations, relying on the formal guarantees of differential privacy (DP) in the embedding space. However, many existing approaches either achieve unsatisfactory performance in the high privacy regime when using the Laplacian or Gaussian mechanism, or resort to weaker relaxations of DP that are inferior to the canonical DP in terms of privacy strength. This raises the question of whether a new method for private word embedding can be designed to overcome these limitations. In this paper, we propose a novel private embedding method called the high dimensional truncated Laplacian mechanism. Specifically, we introduce a non-trivial extension of the truncated Laplacian mechanism, which was previously investigated only in the one-dimensional case. Theoretically, we show that our method has a lower variance compared to the previous private word embedding methods. To further validate its effectiveness, we conduct comprehensive experiments on private embedding and downstream tasks using three datasets. Remarkably, even in the high privacy regime, our approach only incurs a slight decrease in utility compared to the non-private scenario.
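For readers unfamiliar with truncated Laplacian noise, the sketch below samples from the one-dimensional version: a density proportional to exp(-|x| / scale) restricted to [-bound, bound], drawn by inverting the CDF of the magnitude. The `scale` and `bound` values are hypothetical, and the code makes no privacy guarantee. The paper's contribution is a high-dimensional extension with calibrated parameters, which is not reproduced here.

```python
import math
import random


def sample_truncated_laplace(scale: float, bound: float, rng: random.Random) -> float:
    """Draw one sample with density proportional to exp(-|x| / scale) on [-bound, bound]."""
    u = rng.random()  # uniform in [0, 1)
    # Inverse CDF of the magnitude, whose density is proportional to exp(-m / scale) on [0, bound].
    magnitude = -scale * math.log(1.0 - u * (1.0 - math.exp(-bound / scale)))
    magnitude = min(magnitude, bound)  # guard against floating-point overshoot
    sign = 1.0 if rng.random() < 0.5 else -1.0
    return sign * magnitude


def perturb(value: float, scale: float, bound: float, rng: random.Random) -> float:
    """Add bounded noise to a single coordinate (illustrative only)."""
    return value + sample_truncated_laplace(scale, bound, rng)
```

Because the noise can never exceed `bound` in absolute value, its variance is smaller than that of an untruncated Laplace distribution with the same scale.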

+

+

+

+ 31. 【2410.08014】LLM Cascade with Multi-Objective Optimal Consideration

+ Link: https://arxiv.org/abs/2410.08014

+ Authors: Kai Zhang, Liqian Peng, Congchao Wang, Alec Go, Xiaozhong Liu

+ Categories: Computation and Language (cs.CL)

+ Keywords: Large Language Models, generating natural language, Large Language, demonstrated exceptional capabilities, natural language

+ Comments:

+

+ Click to view abstract

+ Abstract:Large Language Models (LLMs) have demonstrated exceptional capabilities in understanding and generating natural language. However, their high deployment costs often pose a barrier to practical applications. Cascading local and server models offers a promising solution to this challenge. While existing studies on LLM cascades have primarily focused on the performance-cost trade-off, real-world scenarios often involve more complex requirements. This paper introduces a novel LLM Cascade strategy with Multi-Objective Optimization, enabling LLM cascades to consider additional objectives (e.g., privacy) and better align with the specific demands of real-world applications while maintaining their original cascading abilities. Extensive experiments on three benchmarks validate the effectiveness and superiority of our approach.

+

+

+

+ 32. 【2410.07991】Human and LLM Biases in Hate Speech Annotations: A Socio-Demographic Analysis of Annotators and Targets

+ Link: https://arxiv.org/abs/2410.07991

+ Authors: Tommaso Giorgi, Lorenzo Cima, Tiziano Fagni, Marco Avvenuti, Stefano Cresci

+ Categories: Computation and Language (cs.CL); Artificial Intelligence (cs.AI); Human-Computer Interaction (cs.HC)

+ Keywords: online platforms exacerbated, hate speech detection, hate speech, speech detection systems, speech detection

+ Comments:

+

+ Click to view abstract

+ Abstract:The rise of online platforms has exacerbated the spread of hate speech, demanding scalable and effective detection. However, the accuracy of hate speech detection systems heavily relies on human-labeled data, which is inherently susceptible to biases. While previous work has examined the issue, the interplay between the characteristics of the annotator and those of the target of the hate is still unexplored. We fill this gap by leveraging an extensive dataset with rich socio-demographic information of both annotators and targets, uncovering how human biases manifest in relation to the target's attributes. Our analysis surfaces the presence of widespread biases, which we quantitatively describe and characterize based on their intensity and prevalence, revealing marked differences. Furthermore, we compare human biases with those exhibited by persona-based LLMs. Our findings indicate that while persona-based LLMs do exhibit biases, these differ significantly from those of human annotators. Overall, our work offers new and nuanced results on human biases in hate speech annotations, as well as fresh insights into the design of AI-driven hate speech detection systems.

+

+

+

+ 33. 【2410.07985】Omni-MATH: A Universal Olympiad Level Mathematic Benchmark For Large Language Models

+ Link: https://arxiv.org/abs/2410.07985

+ Authors: Bofei Gao, Feifan Song, Zhe Yang, Zefan Cai, Yibo Miao, Qingxiu Dong, Lei Li, Chenghao Ma, Liang Chen, Runxin Xu, Zhengyang Tang, Benyou Wang, Daoguang Zan, Shanghaoran Quan, Ge Zhang, Lei Sha, Yichang Zhang, Xuancheng Ren, Tianyu Liu, Baobao Chang

+ Categories: Computation and Language (cs.CL)

+ Keywords: Recent advancements, large language models, advancements in large, large language, mathematical reasoning capabilities

+ Comments: 26 Pages, 17 Figures

+

+ Click to view abstract

+ Abstract:Recent advancements in large language models (LLMs) have led to significant breakthroughs in mathematical reasoning capabilities. However, existing benchmarks like GSM8K or MATH are now being solved with high accuracy (e.g., OpenAI o1 achieves 94.8% on MATH dataset), indicating their inadequacy for truly challenging these models. To bridge this gap, we propose a comprehensive and challenging benchmark specifically designed to assess LLMs' mathematical reasoning at the Olympiad level. Unlike existing Olympiad-related benchmarks, our dataset focuses exclusively on mathematics and comprises a vast collection of 4428 competition-level problems with rigorous human annotation. These problems are meticulously categorized into over 33 sub-domains and span more than 10 distinct difficulty levels, enabling a holistic assessment of model performance in Olympiad-mathematical reasoning. Furthermore, we conducted an in-depth analysis based on this benchmark. Our experimental results show that even the most advanced models, OpenAI o1-mini and OpenAI o1-preview, struggle with highly challenging Olympiad-level problems, with 60.54% and 52.55% accuracy, highlighting significant challenges in Olympiad-level mathematical reasoning.

+

+

+

+ 34. 【2410.07959】COMPL-AI Framework: A Technical Interpretation and LLM Benchmarking Suite for the EU Artificial Intelligence Act

+ Link: https://arxiv.org/abs/2410.07959

+ Authors: Philipp Guldimann, Alexander Spiridonov, Robin Staab, Nikola Jovanović, Mark Vero, Velko Vechev, Anna Gueorguieva, Mislav Balunović, Nikola Konstantinov, Pavol Bielik, Petar Tsankov, Martin Vechev

+ Categories: Computation and Language (cs.CL); Artificial Intelligence (cs.AI); Computers and Society (cs.CY); Machine Learning (cs.LG)

+ Keywords: Artificial Intelligence Act, assess models' compliance, Artificial Intelligence, lacks clear technical, clear technical interpretation

+ Comments:

+

+ Click to view abstract

+ Abstract:The EU's Artificial Intelligence Act (AI Act) is a significant step towards responsible AI development, but lacks clear technical interpretation, making it difficult to assess models' compliance. This work presents COMPL-AI, a comprehensive framework consisting of (i) the first technical interpretation of the EU AI Act, translating its broad regulatory requirements into measurable technical requirements, with the focus on large language models (LLMs), and (ii) an open-source Act-centered benchmarking suite, based on thorough surveying and implementation of state-of-the-art LLM benchmarks. By evaluating 12 prominent LLMs in the context of COMPL-AI, we reveal shortcomings in existing models and benchmarks, particularly in areas like robustness, safety, diversity, and fairness. This work highlights the need for a shift in focus towards these aspects, encouraging balanced development of LLMs and more comprehensive regulation-aligned benchmarks. Simultaneously, COMPL-AI for the first time demonstrates the possibilities and difficulties of bringing the Act's obligations to a more concrete, technical level. As such, our work can serve as a useful first step towards having actionable recommendations for model providers, and contributes to ongoing efforts of the EU to enable application of the Act, such as the drafting of the GPAI Code of Practice.

+

+

+

+ 35. 【2410.07951】Disease Entity Recognition and Normalization is Improved with Large Language Model Derived Synthetic Normalized Mentions

+ Link: https://arxiv.org/abs/2410.07951

+ Authors: Kuleen Sasse, Shinjitha Vadlakonda, Richard E. Kennedy, John D. Osborne

+ Categories: Computation and Language (cs.CL); Machine Learning (cs.LG)

+ Keywords: Knowledge Graphs, clinical named entity, named entity recognition, Disease Entity Recognition, entity recognition

+ Comments: 21 pages, 3 figures, 7 tables

+

+ Click to view abstract

+ Abstract:Background: Machine learning methods for clinical named entity recognition and entity normalization systems can utilize both labeled corpora and Knowledge Graphs (KGs) for learning. However, infrequently occurring concepts may have few mentions in training corpora and lack detailed descriptions or synonyms, even in large KGs. For Disease Entity Recognition (DER) and Disease Entity Normalization (DEN), this can result in fewer high quality training examples relative to the number of known diseases. Large Language Model (LLM) generation of synthetic training examples could improve performance in these information extraction tasks.

+Methods: We fine-tuned a LLaMa-2 13B Chat LLM to generate a synthetic corpus containing normalized mentions of concepts from the Unified Medical Language System (UMLS) Disease Semantic Group. We measured overall and Out of Distribution (OOD) performance for DER and DEN, with and without synthetic data augmentation. We evaluated performance on 3 different disease corpora using 4 different data augmentation strategies, assessed using BioBERT for DER and SapBERT and KrissBERT for DEN.

+Results: Our synthetic data yielded a substantial improvement for DEN: in all 3 training corpora, the top-1 accuracy of both SapBERT and KrissBERT improved by 3-9 points in overall performance and by 20-55 points on OOD data. A small improvement (1-2 points) was also seen for DER in overall performance, but only one dataset showed OOD improvement.

+Conclusion: LLM generation of normalized disease mentions can improve DEN relative to normalization approaches that do not utilize LLMs to augment data with synthetic mentions. Ablation studies indicate that performance gains for DEN were only partially attributable to improvements in OOD performance. The same approach has only a limited ability to improve DER. We make our software and dataset publicly available.

+

+

+

+ 36. 【2410.07919】InstructBioMol: Advancing Biomolecule Understanding and Design Following Human Instructions

+ Link: https://arxiv.org/abs/2410.07919

+ Authors: Xiang Zhuang, Keyan Ding, Tianwen Lyu, Yinuo Jiang, Xiaotong Li, Zhuoyi Xiang, Zeyuan Wang, Ming Qin, Kehua Feng, Jike Wang, Qiang Zhang, Huajun Chen

+ Categories: Computation and Language (cs.CL); Biomolecules (q-bio.BM)

+ Keywords: Understanding and designing, advancing drug discovery, natural language, synthetic biology, central to advancing

+ Notes:

+

+ Click to view abstract

+ Abstract:Understanding and designing biomolecules, such as proteins and small molecules, is central to advancing drug discovery, synthetic biology, and enzyme engineering. Recent breakthroughs in Artificial Intelligence (AI) have revolutionized biomolecular research, achieving remarkable accuracy in biomolecular prediction and design. However, a critical gap remains between AI's computational power and researchers' intuition, using natural language to align molecular complexity with human intentions. Large Language Models (LLMs) have shown potential to interpret human intentions, yet their application to biomolecular research remains nascent due to challenges including specialized knowledge requirements, multimodal data integration, and semantic alignment between natural language and biomolecules. To address these limitations, we present InstructBioMol, a novel LLM designed to bridge natural language and biomolecules through a comprehensive any-to-any alignment of natural language, molecules, and proteins. This model can integrate multimodal biomolecules as input, and enable researchers to articulate design goals in natural language, providing biomolecular outputs that meet precise biological needs. Experimental results demonstrate InstructBioMol can understand and design biomolecules following human instructions. Notably, it can generate drug molecules with a 10% improvement in binding affinity and design enzymes that achieve an ESP Score of 70.4, making it the only method to surpass the enzyme-substrate interaction threshold of 60.0 recommended by the ESP developer. This highlights its potential to transform real-world biomolecular research.

+

+

+

+ 37. 【2410.07880】Unsupervised Data Validation Methods for Efficient Model Training

+ Link: https://arxiv.org/abs/2410.07880

+ Authors: Yurii Paniv

+ Categories: Computation and Language (cs.CL); Machine Learning (cs.LG)

+ Keywords: low-resource languages, potential solutions, solutions for improving, systems for low-resource, machine learning systems

+ Notes:

+

+ Click to view abstract

+ Abstract:This paper investigates the challenges and potential solutions for improving machine learning systems for low-resource languages. State-of-the-art models in natural language processing (NLP), text-to-speech (TTS), speech-to-text (STT), and vision-language models (VLM) rely heavily on large datasets, which are often unavailable for low-resource languages. This research explores key areas such as defining "quality data," developing methods for generating appropriate data and enhancing accessibility to model training. A comprehensive review of current methodologies, including data augmentation, multilingual transfer learning, synthetic data generation, and data selection techniques, highlights both advancements and limitations. Several open research questions are identified, providing a framework for future studies aimed at optimizing data utilization, reducing the required data quantity, and maintaining high-quality model performance. By addressing these challenges, the paper aims to make advanced machine learning models more accessible for low-resource languages, enhancing their utility and impact across various sectors.

+

+

+

+ 38. 【2410.07869】Benchmarking Agentic Workflow Generation

+ Link: https://arxiv.org/abs/2410.07869

+ Authors: Shuofei Qiao, Runnan Fang, Zhisong Qiu, Xiaobin Wang, Ningyu Zhang, Yong Jiang, Pengjun Xie, Fei Huang, Huajun Chen

+ Categories: Computation and Language (cs.CL); Artificial Intelligence (cs.AI); Human-Computer Interaction (cs.HC); Machine Learning (cs.LG); Multiagent Systems (cs.MA)

+ Keywords: Large Language Models, Large Language, driven significant advancements, decomposing complex problems, Language Models

+ Notes: Work in progress

+

+ Click to view abstract

+ Abstract:Large Language Models (LLMs), with their exceptional ability to handle a wide range of tasks, have driven significant advancements in tackling reasoning and planning tasks, wherein decomposing complex problems into executable workflows is a crucial step in this process. Existing workflow evaluation frameworks either focus solely on holistic performance or suffer from limitations such as restricted scenario coverage, simplistic workflow structures, and lax evaluation standards. To this end, we introduce WorFBench, a unified workflow generation benchmark with multi-faceted scenarios and intricate graph workflow structures. Additionally, we present WorFEval, a systemic evaluation protocol utilizing subsequence and subgraph matching algorithms to accurately quantify the LLM agent's workflow generation capabilities. Through comprehensive evaluations across different types of LLMs, we discover distinct gaps between the sequence planning capabilities and graph planning capabilities of LLM agents, with even GPT-4 exhibiting a gap of around 15%. We also train two open-source models and evaluate their generalization abilities on held-out tasks. Furthermore, we observe that the generated workflows can enhance downstream tasks, enabling them to achieve superior performance with less time during inference. Code and dataset will be available at this https URL.

+

+

+

+ 39. 【2410.07839】Enhancing Language Model Reasoning via Weighted Reasoning in Self-Consistency

+ Link: https://arxiv.org/abs/2410.07839

+ Authors: Tim Knappe, Ryan Li, Ayush Chauhan, Kaylee Chhua, Kevin Zhu, Sean O'Brien

+ Categories: Computation and Language (cs.CL)

+ Keywords: large language models, reasoning tasks, tasks, large language, rapidly improved

+ Notes: Accepted to MATH-AI at NeurIPS 2024

+

+ Click to view abstract

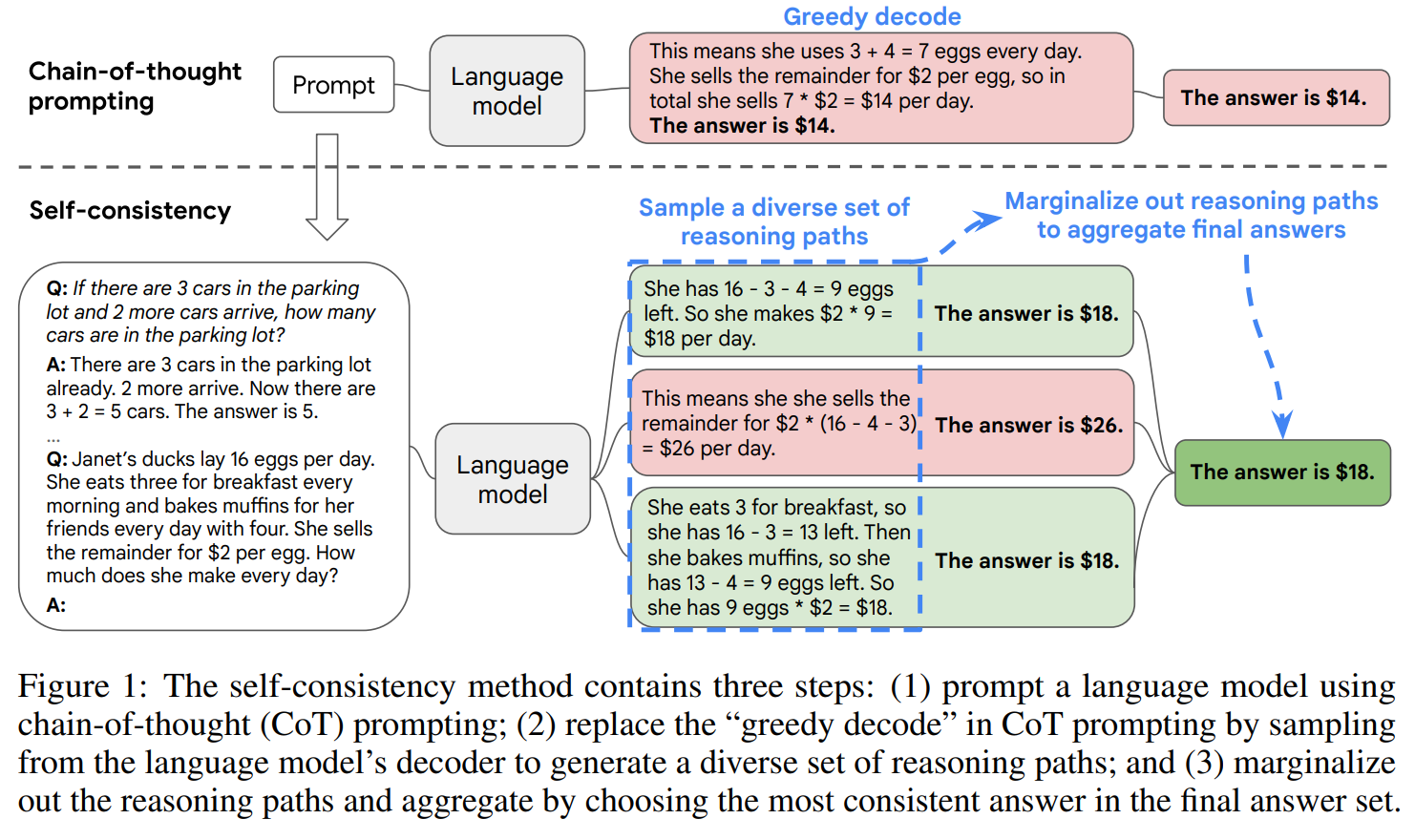

+ Abstract:While large language models (LLMs) have rapidly improved their performance on a broad number of tasks, they still often fall short on reasoning tasks. As LLMs become more integrated in diverse real-world tasks, advancing their reasoning capabilities is crucial to their effectiveness in nuanced, complex problems. Wang et al's self-consistency framework reveals that sampling multiple rationales before taking a majority vote reliably improves model performance across various closed-answer reasoning tasks. Standard methods based on this framework aggregate the final decisions of these rationales but fail to utilize the detailed step-by-step reasoning paths applied by these paths. Our work enhances this approach by incorporating and analyzing both the reasoning paths of these rationales in addition to their final decisions before taking a majority vote. These methods not only improve the reliability of reasoning paths but also cause more robust performance on complex reasoning tasks.
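The majority-vote step of self-consistency described in this abstract can be illustrated with a minimal sketch. The `weighted_vote` function and the per-rationale `weights` below are hypothetical stand-ins for some score derived from each reasoning path; the abstract does not specify how the authors weight rationales, so this should not be read as their method.

```python
from collections import Counter, defaultdict


def majority_vote(answers):
    """Plain self-consistency: return the most frequent final answer."""
    return Counter(answers).most_common(1)[0][0]


def weighted_vote(answers, weights):
    """Hypothetical variant: each rationale contributes its weight, not one vote."""
    totals = defaultdict(float)
    for answer, weight in zip(answers, weights):
        totals[answer] += weight
    # Pick the answer with the largest accumulated weight.
    return max(totals, key=totals.get)


# Final answers extracted from five sampled rationales (made-up values).
answers = ["42", "41", "42", "40", "42"]
# Assumed per-rationale scores, e.g. from judging each reasoning path.
weights = [0.2, 0.9, 0.2, 0.1, 0.2]

print(majority_vote(answers))
print(weighted_vote(answers, weights))
```

With these made-up inputs the two schemes disagree: the plain vote follows the three low-weight rationales, while the weighted vote follows the single high-weight one. This shows why taking the reasoning path into account can change the aggregated answer.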

+

+

+

+ 40. 【2410.07830】NusaMT-7B: Machine Translation for Low-Resource Indonesian Languages with Large Language Models

+ Link: https://arxiv.org/abs/2410.07830

+ Authors: William Tan, Kevin Zhu

+ Categories: Computation and Language (cs.CL)

+ Keywords: demonstrated exceptional promise, Large Language Models, Large Language, Balinese and Minangkabau, demonstrated exceptional

+ Notes: Accepted to SoLaR @ NeurIPS 2024

+

+ Click to view abstract

+ Abstract:Large Language Models (LLMs) have demonstrated exceptional promise in translation tasks for high-resource languages. However, their performance in low-resource languages is limited by the scarcity of both parallel and monolingual corpora, as well as the presence of noise. Consequently, such LLMs suffer with alignment and have lagged behind State-of-The-Art (SoTA) neural machine translation (NMT) models in these settings. This paper introduces NusaMT-7B, an LLM-based machine translation model for low-resource Indonesian languages, starting with Balinese and Minangkabau. Leveraging the pretrained LLaMA2-7B, our approach integrates continued pre-training on monolingual data, Supervised Fine-Tuning (SFT), self-learning, and an LLM-based data cleaner to reduce noise in parallel sentences. In the FLORES-200 multilingual translation benchmark, NusaMT-7B outperforms SoTA models in the spBLEU metric by up to +6.69 spBLEU in translations into Balinese and Minangkabau, but underperforms by up to -3.38 spBLEU in translations into higher-resource languages. Our results show that fine-tuned LLMs can enhance translation quality for low-resource languages, aiding in linguistic preservation and cross-cultural communication.

+

+

+

+ 41. 【2410.07827】Why do objects have many names? A study on word informativeness in language use and lexical systems

+ Link: https://arxiv.org/abs/2410.07827

+ Authors: Eleonora Gualdoni, Gemma Boleda

+ Categories: Computation and Language (cs.CL)

+ Keywords: Human lexicons, lexical systems, Human, lexical, systems

+ Notes: Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing (EMNLP 2024)

+

+ Click to view abstract

+ Abstract:Human lexicons contain many different words that speakers can use to refer to the same object, e.g., "purple" or "magenta" for the same shade of color. On the one hand, studies on language use have explored how speakers adapt their referring expressions to successfully communicate in context, without focusing on properties of the lexical system. On the other hand, studies in language evolution have discussed how competing pressures for informativeness and simplicity shape lexical systems, without tackling in-context communication. We aim at bridging the gap between these traditions, and explore why a soft mapping between referents and words is a good solution for communication, by taking into account both in-context communication and the structure of the lexicon. We propose a simple measure of informativeness for words and lexical systems, grounded in a visual space, and analyze color naming data for English and Mandarin Chinese. We conclude that optimal lexical systems are those where multiple words can apply to the same referent, conveying different amounts of information. Such systems allow speakers to maximize communication accuracy and minimize the amount of information they convey when communicating about referents in contexts.

+

+

+

+ 42. 【2410.07826】Fine-Tuning Language Models for Ethical Ambiguity: A Comparative Study of Alignment with Human Responses

+ Link: https://arxiv.org/abs/2410.07826

+ Authors: Pranav Senthilkumar, Visshwa Balasubramanian, Prisha Jain, Aneesa Maity, Jonathan Lu, Kevin Zhu

+ Categories: Computation and Language (cs.CL)

+ Keywords: well-recognized in NLP, misinterpret human intentions, human intentions due, Language models, handling of ambiguity

+ Notes: Accepted to NeurIPS 2024, SoLaR workshop

+

+ Click to view abstract