本篇博文主要展示每日从Arxiv论文网站获取的最新论文列表,以计算机视觉、自然语言处理、机器学习、人工智能等大方向进行划分。

+统计

+今日共更新326篇论文,其中:

+

+计算机视觉

+

+ 1. 标题:A Large-scale Dataset for Audio-Language Representation Learning

+ 编号:[1]

+ 链接:https://arxiv.org/abs/2309.11500

+ 作者:Luoyi Sun, Xuenan Xu, Mengyue Wu, Weidi Xie

+ 备注:

+ 关键词:made significant strides, developing powerful foundation, powerful foundation models, made significant, significant strides

+

+ 点击查看摘要

+ The AI community has made significant strides in developing powerful foundation models, driven by large-scale multimodal datasets. However, in the audio representation learning community, the present audio-language datasets suffer from limitations such as insufficient volume, simplistic content, and arduous collection procedures. To tackle these challenges, we present an innovative and automatic audio caption generation pipeline based on a series of public tools or APIs, and construct a large-scale, high-quality, audio-language dataset, named as Auto-ACD, comprising over 1.9M audio-text pairs. To demonstrate the effectiveness of the proposed dataset, we train popular models on our dataset and show performance improvement on various downstream tasks, namely, audio-language retrieval, audio captioning, environment classification. In addition, we establish a novel test set and provide a benchmark for audio-text tasks. The proposed dataset will be released at this https URL.

+

+

+

+ 2. 标题:DreamLLM: Synergistic Multimodal Comprehension and Creation

+ 编号:[2]

+ 链接:https://arxiv.org/abs/2309.11499

+ 作者:Runpei Dong, Chunrui Han, Yuang Peng, Zekun Qi, Zheng Ge, Jinrong Yang, Liang Zhao, Jianjian Sun, Hongyu Zhou, Haoran Wei, Xiangwen Kong, Xiangyu Zhang, Kaisheng Ma, Li Yi

+ 备注:see project page at this https URL

+ 关键词:Large Language Models, versatile Multimodal Large, Multimodal Large Language, Language Models, Large Language

+

+ 点击查看摘要

+ This paper presents DreamLLM, a learning framework that first achieves versatile Multimodal Large Language Models (MLLMs) empowered with frequently overlooked synergy between multimodal comprehension and creation. DreamLLM operates on two fundamental principles. The first focuses on the generative modeling of both language and image posteriors by direct sampling in the raw multimodal space. This approach circumvents the limitations and information loss inherent to external feature extractors like CLIP, and a more thorough multimodal understanding is obtained. Second, DreamLLM fosters the generation of raw, interleaved documents, modeling both text and image contents, along with unstructured layouts. This allows DreamLLM to learn all conditional, marginal, and joint multimodal distributions effectively. As a result, DreamLLM is the first MLLM capable of generating free-form interleaved content. Comprehensive experiments highlight DreamLLM's superior performance as a zero-shot multimodal generalist, reaping from the enhanced learning synergy.

+

+

+

+ 3. 标题:FreeU: Free Lunch in Diffusion U-Net

+ 编号:[3]

+ 链接:https://arxiv.org/abs/2309.11497

+ 作者:Chenyang Si, Ziqi Huang, Yuming Jiang, Ziwei Liu

+ 备注:Project page: this https URL

+ 关键词:free lunch, uncover the untapped, untapped potential, generation quality, U-Net skip connections

+

+ 点击查看摘要

+ In this paper, we uncover the untapped potential of diffusion U-Net, which serves as a "free lunch" that substantially improves the generation quality on the fly. We initially investigate the key contributions of the U-Net architecture to the denoising process and identify that its main backbone primarily contributes to denoising, whereas its skip connections mainly introduce high-frequency features into the decoder module, causing the network to overlook the backbone semantics. Capitalizing on this discovery, we propose a simple yet effective method-termed "FreeU" - that enhances generation quality without additional training or finetuning. Our key insight is to strategically re-weight the contributions sourced from the U-Net's skip connections and backbone feature maps, to leverage the strengths of both components of the U-Net architecture. Promising results on image and video generation tasks demonstrate that our FreeU can be readily integrated to existing diffusion models, e.g., Stable Diffusion, DreamBooth, ModelScope, Rerender and ReVersion, to improve the generation quality with only a few lines of code. All you need is to adjust two scaling factors during inference. Project page: https://chenyangsi.top/FreeU/.

+

+

+

+ 4. 标题:Budget-Aware Pruning: Handling Multiple Domains with Less Parameters

+ 编号:[15]

+ 链接:https://arxiv.org/abs/2309.11464

+ 作者:Samuel Felipe dos Santos, Rodrigo Berriel, Thiago Oliveira-Santos, Nicu Sebe, Jurandy Almeida

+ 备注:arXiv admin note: substantial text overlap with arXiv:2210.08101

+ 关键词:computer vision tasks, computer vision, single domain, single, domains

+

+ 点击查看摘要

+ Deep learning has achieved state-of-the-art performance on several computer vision tasks and domains. Nevertheless, it still has a high computational cost and demands a significant amount of parameters. Such requirements hinder the use in resource-limited environments and demand both software and hardware optimization. Another limitation is that deep models are usually specialized into a single domain or task, requiring them to learn and store new parameters for each new one. Multi-Domain Learning (MDL) attempts to solve this problem by learning a single model that is capable of performing well in multiple domains. Nevertheless, the models are usually larger than the baseline for a single domain. This work tackles both of these problems: our objective is to prune models capable of handling multiple domains according to a user-defined budget, making them more computationally affordable while keeping a similar classification performance. We achieve this by encouraging all domains to use a similar subset of filters from the baseline model, up to the amount defined by the user's budget. Then, filters that are not used by any domain are pruned from the network. The proposed approach innovates by better adapting to resource-limited devices while, to our knowledge, being the only work that handles multiple domains at test time with fewer parameters and lower computational complexity than the baseline model for a single domain.

+

+

+

+ 5. 标题:Weight Averaging Improves Knowledge Distillation under Domain Shift

+ 编号:[22]

+ 链接:https://arxiv.org/abs/2309.11446

+ 作者:Valeriy Berezovskiy, Nikita Morozov

+ 备注:ICCV 2023 Workshop on Out-of-Distribution Generalization in Computer Vision (OOD-CV)

+ 关键词:deep learning applications, powerful model compression, practical deep learning, model compression technique, compression technique broadly

+

+ 点击查看摘要

+ Knowledge distillation (KD) is a powerful model compression technique broadly used in practical deep learning applications. It is focused on training a small student network to mimic a larger teacher network. While it is widely known that KD can offer an improvement to student generalization in i.i.d setting, its performance under domain shift, i.e. the performance of student networks on data from domains unseen during training, has received little attention in the literature. In this paper we make a step towards bridging the research fields of knowledge distillation and domain generalization. We show that weight averaging techniques proposed in domain generalization literature, such as SWAD and SMA, also improve the performance of knowledge distillation under domain shift. In addition, we propose a simplistic weight averaging strategy that does not require evaluation on validation data during training and show that it performs on par with SWAD and SMA when applied to KD. We name our final distillation approach Weight-Averaged Knowledge Distillation (WAKD).

+

+

+

+ 6. 标题:SkeleTR: Towrads Skeleton-based Action Recognition in the Wild

+ 编号:[23]

+ 链接:https://arxiv.org/abs/2309.11445

+ 作者:Haodong Duan, Mingze Xu, Bing Shuai, Davide Modolo, Zhuowen Tu, Joseph Tighe, Alessandro Bergamo

+ 备注:ICCV 2023

+ 关键词:action, SkeleTR, action recognition, skeleton-based action, recognition

+

+ 点击查看摘要

+ We present SkeleTR, a new framework for skeleton-based action recognition. In contrast to prior work, which focuses mainly on controlled environments, we target more general scenarios that typically involve a variable number of people and various forms of interaction between people. SkeleTR works with a two-stage paradigm. It first models the intra-person skeleton dynamics for each skeleton sequence with graph convolutions, and then uses stacked Transformer encoders to capture person interactions that are important for action recognition in general scenarios. To mitigate the negative impact of inaccurate skeleton associations, SkeleTR takes relative short skeleton sequences as input and increases the number of sequences. As a unified solution, SkeleTR can be directly applied to multiple skeleton-based action tasks, including video-level action classification, instance-level action detection, and group-level activity recognition. It also enables transfer learning and joint training across different action tasks and datasets, which result in performance improvement. When evaluated on various skeleton-based action recognition benchmarks, SkeleTR achieves the state-of-the-art performance.

+

+

+

+ 7. 标题:Signature Activation: A Sparse Signal View for Holistic Saliency

+ 编号:[24]

+ 链接:https://arxiv.org/abs/2309.11443

+ 作者:Jose Roberto Tello Ayala, Akl C. Fahed, Weiwei Pan, Eugene V. Pomerantsev, Patrick T. Ellinor, Anthony Philippakis, Finale Doshi-Velez

+ 备注:

+ 关键词:Convolutional Neural Network, introduce Signature Activation, transparency and explainability, adoption of machine, machine learning

+

+ 点击查看摘要

+ The adoption of machine learning in healthcare calls for model transparency and explainability. In this work, we introduce Signature Activation, a saliency method that generates holistic and class-agnostic explanations for Convolutional Neural Network (CNN) outputs. Our method exploits the fact that certain kinds of medical images, such as angiograms, have clear foreground and background objects. We give theoretical explanation to justify our methods. We show the potential use of our method in clinical settings through evaluating its efficacy for aiding the detection of lesions in coronary angiograms.

+

+

+

+ 8. 标题:A Systematic Review of Few-Shot Learning in Medical Imaging

+ 编号:[27]

+ 链接:https://arxiv.org/abs/2309.11433

+ 作者:Eva Pachetti, Sara Colantonio

+ 备注:48 pages, 29 figures, 10 tables, submitted to Elsevier on 19 Sep 2023

+ 关键词:deep learning models, large-scale labelled datasets, Few-shot learning, annotated medical images, medical images limits

+

+ 点击查看摘要

+ The lack of annotated medical images limits the performance of deep learning models, which usually need large-scale labelled datasets. Few-shot learning techniques can reduce data scarcity issues and enhance medical image analysis, especially with meta-learning. This systematic review gives a comprehensive overview of few-shot learning in medical imaging. We searched the literature systematically and selected 80 relevant articles published from 2018 to 2023. We clustered the articles based on medical outcomes, such as tumour segmentation, disease classification, and image registration; anatomical structure investigated (i.e. heart, lung, etc.); and the meta-learning method used. For each cluster, we examined the papers' distributions and the results provided by the state-of-the-art. In addition, we identified a generic pipeline shared among all the studies. The review shows that few-shot learning can overcome data scarcity in most outcomes and that meta-learning is a popular choice to perform few-shot learning because it can adapt to new tasks with few labelled samples. In addition, following meta-learning, supervised learning and semi-supervised learning stand out as the predominant techniques employed to tackle few-shot learning challenges in medical imaging and also best performing. Lastly, we observed that the primary application areas predominantly encompass cardiac, pulmonary, and abdominal domains. This systematic review aims to inspire further research to improve medical image analysis and patient care.

+

+

+

+ 9. 标题:Kosmos-2.5: A Multimodal Literate Model

+ 编号:[30]

+ 链接:https://arxiv.org/abs/2309.11419

+ 作者:Tengchao Lv, Yupan Huang, Jingye Chen, Lei Cui, Shuming Ma, Yaoyao Chang, Shaohan Huang, Wenhui Wang, Li Dong, Weiyao Luo, Shaoxiang Wu, Guoxin Wang, Cha Zhang, Furu Wei

+ 备注:

+ 关键词:machine reading, text-intensive images, text, large-scale text-intensive images, multimodal literate

+

+ 点击查看摘要

+ We present Kosmos-2.5, a multimodal literate model for machine reading of text-intensive images. Pre-trained on large-scale text-intensive images, Kosmos-2.5 excels in two distinct yet cooperative transcription tasks: (1) generating spatially-aware text blocks, where each block of text is assigned its spatial coordinates within the image, and (2) producing structured text output that captures styles and structures into the markdown format. This unified multimodal literate capability is achieved through a shared Transformer architecture, task-specific prompts, and flexible text representations. We evaluate Kosmos-2.5 on end-to-end document-level text recognition and image-to-markdown text generation. Furthermore, the model can be readily adapted for any text-intensive image understanding task with different prompts through supervised fine-tuning, making it a general-purpose tool for real-world applications involving text-rich images. This work also paves the way for the future scaling of multimodal large language models.

+

+

+

+ 10. 标题:CNNs for JPEGs: A Study in Computational Cost

+ 编号:[31]

+ 链接:https://arxiv.org/abs/2309.11417

+ 作者:Samuel Felipe dos Santos, Nicu Sebe, Jurandy Almeida

+ 备注:

+ 关键词:computer vision tasks, achieved astonishing advances, Convolutional neural networks, Convolutional neural, past decade

+

+ 点击查看摘要

+ Convolutional neural networks (CNNs) have achieved astonishing advances over the past decade, defining state-of-the-art in several computer vision tasks. CNNs are capable of learning robust representations of the data directly from the RGB pixels. However, most image data are usually available in compressed format, from which the JPEG is the most widely used due to transmission and storage purposes demanding a preliminary decoding process that have a high computational load and memory usage. For this reason, deep learning methods capable of learning directly from the compressed domain have been gaining attention in recent years. Those methods usually extract a frequency domain representation of the image, like DCT, by a partial decoding, and then make adaptation to typical CNNs architectures to work with them. One limitation of these current works is that, in order to accommodate the frequency domain data, the modifications made to the original model increase significantly their amount of parameters and computational complexity. On one hand, the methods have faster preprocessing, since the cost of fully decoding the images is avoided, but on the other hand, the cost of passing the images though the model is increased, mitigating the possible upside of accelerating the method. In this paper, we propose a further study of the computational cost of deep models designed for the frequency domain, evaluating the cost of decoding and passing the images through the network. We also propose handcrafted and data-driven techniques for reducing the computational complexity and the number of parameters for these models in order to keep them similar to their RGB baselines, leading to efficient models with a better trade off between computational cost and accuracy.

+

+

+

+ 11. 标题:Enhancing motion trajectory segmentation of rigid bodies using a novel screw-based trajectory-shape representation

+ 编号:[33]

+ 链接:https://arxiv.org/abs/2309.11413

+ 作者:Arno Verduyn, Maxim Vochten, Joris De Schutter

+ 备注:This work has been submitted to the IEEE International Conference on Robotics and Automation (ICRA) for possible publication. Copyright may be transferred without notice, after which this version may no longer be accessible

+ 关键词:meaningful consecutive sub-trajectories, refers to dividing, Trajectory, segmentation, Trajectory segmentation refers

+

+ 点击查看摘要

+ Trajectory segmentation refers to dividing a trajectory into meaningful consecutive sub-trajectories. This paper focuses on trajectory segmentation for 3D rigid-body motions. Most segmentation approaches in the literature represent the body's trajectory as a point trajectory, considering only its translation and neglecting its rotation. We propose a novel trajectory representation for rigid-body motions that incorporates both translation and rotation, and additionally exhibits several invariant properties. This representation consists of a geometric progress rate and a third-order trajectory-shape descriptor. Concepts from screw theory were used to make this representation time-invariant and also invariant to the choice of body reference point. This new representation is validated for a self-supervised segmentation approach, both in simulation and using real recordings of human-demonstrated pouring motions. The results show a more robust detection of consecutive submotions with distinct features and a more consistent segmentation compared to conventional representations. We believe that other existing segmentation methods may benefit from using this trajectory representation to improve their invariance.

+

+

+

+ 12. 标题:Discuss Before Moving: Visual Language Navigation via Multi-expert Discussions

+ 编号:[42]

+ 链接:https://arxiv.org/abs/2309.11382

+ 作者:Yuxing Long, Xiaoqi Li, Wenzhe Cai, Hao Dong

+ 备注:Submitted to ICRA 2024

+ 关键词:skills encompassing understanding, embodied task demanding, demanding a wide, wide range, range of skills

+

+ 点击查看摘要

+ Visual language navigation (VLN) is an embodied task demanding a wide range of skills encompassing understanding, perception, and planning. For such a multifaceted challenge, previous VLN methods totally rely on one model's own thinking to make predictions within one round. However, existing models, even the most advanced large language model GPT4, still struggle with dealing with multiple tasks by single-round self-thinking. In this work, drawing inspiration from the expert consultation meeting, we introduce a novel zero-shot VLN framework. Within this framework, large models possessing distinct abilities are served as domain experts. Our proposed navigation agent, namely DiscussNav, can actively discuss with these experts to collect essential information before moving at every step. These discussions cover critical navigation subtasks like instruction understanding, environment perception, and completion estimation. Through comprehensive experiments, we demonstrate that discussions with domain experts can effectively facilitate navigation by perceiving instruction-relevant information, correcting inadvertent errors, and sifting through in-consistent movement decisions. The performances on the representative VLN task R2R show that our method surpasses the leading zero-shot VLN model by a large margin on all metrics. Additionally, real-robot experiments display the obvious advantages of our method over single-round self-thinking.

+

+

+

+ 13. 标题:3D Face Reconstruction: the Road to Forensics

+ 编号:[52]

+ 链接:https://arxiv.org/abs/2309.11357

+ 作者:Simone Maurizio La Cava, Giulia Orrù, Martin Drahansky, Gian Luca Marcialis, Fabio Roli

+ 备注:The manuscript has been accepted for publication in ACM Computing Surveys. arXiv admin note: text overlap with arXiv:2303.11164

+ 关键词:face reconstruction algorithms, face reconstruction, entertainment sector, advantageous features, plastic surgery

+

+ 点击查看摘要

+ 3D face reconstruction algorithms from images and videos are applied to many fields, from plastic surgery to the entertainment sector, thanks to their advantageous features. However, when looking at forensic applications, 3D face reconstruction must observe strict requirements that still make its possible role in bringing evidence to a lawsuit unclear. An extensive investigation of the constraints, potential, and limits of its application in forensics is still missing. Shedding some light on this matter is the goal of the present survey, which starts by clarifying the relation between forensic applications and biometrics, with a focus on face recognition. Therefore, it provides an analysis of the achievements of 3D face reconstruction algorithms from surveillance videos and mugshot images and discusses the current obstacles that separate 3D face reconstruction from an active role in forensic applications. Finally, it examines the underlying data sets, with their advantages and limitations, while proposing alternatives that could substitute or complement them.

+

+

+

+ 14. 标题:Self-supervised learning unveils change in urban housing from street-level images

+ 编号:[54]

+ 链接:https://arxiv.org/abs/2309.11354

+ 作者:Steven Stalder, Michele Volpi, Nicolas Büttner, Stephen Law, Kenneth Harttgen, Esra Suel

+ 备注:16 pages, 5 figures

+ 关键词:world face, shortage of affordable, affordable and decent, critical shortage, decent housing

+

+ 点击查看摘要

+ Cities around the world face a critical shortage of affordable and decent housing. Despite its critical importance for policy, our ability to effectively monitor and track progress in urban housing is limited. Deep learning-based computer vision methods applied to street-level images have been successful in the measurement of socioeconomic and environmental inequalities but did not fully utilize temporal images to track urban change as time-varying labels are often unavailable. We used self-supervised methods to measure change in London using 15 million street images taken between 2008 and 2021. Our novel adaptation of Barlow Twins, Street2Vec, embeds urban structure while being invariant to seasonal and daily changes without manual annotations. It outperformed generic embeddings, successfully identified point-level change in London's housing supply from street-level images, and distinguished between major and minor change. This capability can provide timely information for urban planning and policy decisions toward more liveable, equitable, and sustainable cities.

+

+

+

+ 15. 标题:You can have your ensemble and run it too -- Deep Ensembles Spread Over Time

+ 编号:[63]

+ 链接:https://arxiv.org/abs/2309.11333

+ 作者:Isak Meding, Alexander Bodin, Adam Tonderski, Joakim Johnander, Christoffer Petersson, Lennart Svensson

+ 备注:

+ 关键词:rival Bayesian networks, neural networks yield, Bayesian networks, rival Bayesian, deep neural networks

+

+ 点击查看摘要

+ Ensembles of independently trained deep neural networks yield uncertainty estimates that rival Bayesian networks in performance. They also offer sizable improvements in terms of predictive performance over single models. However, deep ensembles are not commonly used in environments with limited computational budget -- such as autonomous driving -- since the complexity grows linearly with the number of ensemble members. An important observation that can be made for robotics applications, such as autonomous driving, is that data is typically sequential. For instance, when an object is to be recognized, an autonomous vehicle typically observes a sequence of images, rather than a single image. This raises the question, could the deep ensemble be spread over time?

+In this work, we propose and analyze Deep Ensembles Spread Over Time (DESOT). The idea is to apply only a single ensemble member to each data point in the sequence, and fuse the predictions over a sequence of data points. We implement and experiment with DESOT for traffic sign classification, where sequences of tracked image patches are to be classified. We find that DESOT obtains the benefits of deep ensembles, in terms of predictive and uncertainty estimation performance, while avoiding the added computational cost. Moreover, DESOT is simple to implement and does not require sequences during training. Finally, we find that DESOT, like deep ensembles, outperform single models for out-of-distribution detection.

+

+

+

+ 16. 标题:Gold-YOLO: Efficient Object Detector via Gather-and-Distribute Mechanism

+ 编号:[65]

+ 链接:https://arxiv.org/abs/2309.11331

+ 作者:Chengcheng Wang, Wei He, Ying Nie, Jianyuan Guo, Chuanjian Liu, Kai Han, Yunhe Wang

+ 备注:

+ 关键词:real-time object detection, Path Aggregation Network, Feature Pyramid Network, past years, object detection

+

+ 点击查看摘要

+ In the past years, YOLO-series models have emerged as the leading approaches in the area of real-time object detection. Many studies pushed up the baseline to a higher level by modifying the architecture, augmenting data and designing new losses. However, we find previous models still suffer from information fusion problem, although Feature Pyramid Network (FPN) and Path Aggregation Network (PANet) have alleviated this. Therefore, this study provides an advanced Gatherand-Distribute mechanism (GD) mechanism, which is realized with convolution and self-attention operations. This new designed model named as Gold-YOLO, which boosts the multi-scale feature fusion capabilities and achieves an ideal balance between latency and accuracy across all model scales. Additionally, we implement MAE-style pretraining in the YOLO-series for the first time, allowing YOLOseries models could be to benefit from unsupervised pretraining. Gold-YOLO-N attains an outstanding 39.9% AP on the COCO val2017 datasets and 1030 FPS on a T4 GPU, which outperforms the previous SOTA model YOLOv6-3.0-N with similar FPS by +2.4%. The PyTorch code is available at this https URL, and the MindSpore code is available at this https URL.

+

+

+

+ 17. 标题:How to turn your camera into a perfect pinhole model

+ 编号:[66]

+ 链接:https://arxiv.org/abs/2309.11326

+ 作者:Ivan De Boi, Stuti Pathak, Marina Oliveira, Rudi Penne

+ 备注:15 pages, 3 figures, conference CIARP

+ 关键词:computer vision applications, computer vision, Gaussian processes, method, Camera

+

+ 点击查看摘要

+ Camera calibration is a first and fundamental step in various computer vision applications. Despite being an active field of research, Zhang's method remains widely used for camera calibration due to its implementation in popular toolboxes. However, this method initially assumes a pinhole model with oversimplified distortion models. In this work, we propose a novel approach that involves a pre-processing step to remove distortions from images by means of Gaussian processes. Our method does not need to assume any distortion model and can be applied to severely warped images, even in the case of multiple distortion sources, e.g., a fisheye image of a curved mirror reflection. The Gaussian processes capture all distortions and camera imperfections, resulting in virtual images as though taken by an ideal pinhole camera with square pixels. Furthermore, this ideal GP-camera only needs one image of a square grid calibration pattern. This model allows for a serious upgrade of many algorithms and applications that are designed in a pure projective geometry setting but with a performance that is very sensitive to nonlinear lens distortions. We demonstrate the effectiveness of our method by simplifying Zhang's calibration method, reducing the number of parameters and getting rid of the distortion parameters and iterative optimization. We validate by means of synthetic data and real world images. The contributions of this work include the construction of a virtual ideal pinhole camera using Gaussian processes, a simplified calibration method and lens distortion removal.

+

+

+

+ 18. 标题:Face Aging via Diffusion-based Editing

+ 编号:[69]

+ 链接:https://arxiv.org/abs/2309.11321

+ 作者:Xiangyi Chen, Stéphane Lathuilière

+ 备注:accepted at BMVC 2023

+ 关键词:future facial images, generating past, past or future, incorporating age-related, future facial

+

+ 点击查看摘要

+ In this paper, we address the problem of face aging: generating past or future facial images by incorporating age-related changes to the given face. Previous aging methods rely solely on human facial image datasets and are thus constrained by their inherent scale and bias. This restricts their application to a limited generatable age range and the inability to handle large age gaps. We propose FADING, a novel approach to address Face Aging via DIffusion-based editiNG. We go beyond existing methods by leveraging the rich prior of large-scale language-image diffusion models. First, we specialize a pre-trained diffusion model for the task of face age editing by using an age-aware fine-tuning scheme. Next, we invert the input image to latent noise and obtain optimized null text embeddings. Finally, we perform text-guided local age editing via attention control. The quantitative and qualitative analyses demonstrate that our method outperforms existing approaches with respect to aging accuracy, attribute preservation, and aging quality.

+

+

+

+ 19. 标题:Uncovering the effects of model initialization on deep model generalization: A study with adult and pediatric Chest X-ray images

+ 编号:[71]

+ 链接:https://arxiv.org/abs/2309.11318

+ 作者:Sivaramakrishnan Rajaraman, Ghada Zamzmi, Feng Yang, Zhaohui Liang, Zhiyun Xue, Sameer Antani

+ 备注:40 pages, 8 tables, 7 figures, 3 supplementary figures and 4 supplementary tables

+ 关键词:computer vision applications, medical computer vision, vision applications, vital for improving, computer vision

+

+ 点击查看摘要

+ Model initialization techniques are vital for improving the performance and reliability of deep learning models in medical computer vision applications. While much literature exists on non-medical images, the impacts on medical images, particularly chest X-rays (CXRs) are less understood. Addressing this gap, our study explores three deep model initialization techniques: Cold-start, Warm-start, and Shrink and Perturb start, focusing on adult and pediatric populations. We specifically focus on scenarios with periodically arriving data for training, thereby embracing the real-world scenarios of ongoing data influx and the need for model updates. We evaluate these models for generalizability against external adult and pediatric CXR datasets. We also propose novel ensemble methods: F-score-weighted Sequential Least-Squares Quadratic Programming (F-SLSQP) and Attention-Guided Ensembles with Learnable Fuzzy Softmax to aggregate weight parameters from multiple models to capitalize on their collective knowledge and complementary representations. We perform statistical significance tests with 95% confidence intervals and p-values to analyze model performance. Our evaluations indicate models initialized with ImageNet-pre-trained weights demonstrate superior generalizability over randomly initialized counterparts, contradicting some findings for non-medical images. Notably, ImageNet-pretrained models exhibit consistent performance during internal and external testing across different training scenarios. Weight-level ensembles of these models show significantly higher recall (p<0.05) during testing compared to individual models. thus, our study accentuates the benefits of imagenet-pretrained weight initialization, especially when used with weight-level ensembles, for creating robust and generalizable deep learning solutions.< p>

+

+

+

+ 20. 标题:FaceDiffuser: Speech-Driven 3D Facial Animation Synthesis Using Diffusion

+ 编号:[77]

+ 链接:https://arxiv.org/abs/2309.11306

+ 作者:Stefan Stan, Kazi Injamamul Haque, Zerrin Yumak

+ 备注:Pre-print of the paper accepted at ACM SIGGRAPH MIG 2023

+ 关键词:industry and research, facial animation synthesis, facial animation, facial, based

+

+ 点击查看摘要

+ Speech-driven 3D facial animation synthesis has been a challenging task both in industry and research. Recent methods mostly focus on deterministic deep learning methods meaning that given a speech input, the output is always the same. However, in reality, the non-verbal facial cues that reside throughout the face are non-deterministic in nature. In addition, majority of the approaches focus on 3D vertex based datasets and methods that are compatible with existing facial animation pipelines with rigged characters is scarce. To eliminate these issues, we present FaceDiffuser, a non-deterministic deep learning model to generate speech-driven facial animations that is trained with both 3D vertex and blendshape based datasets. Our method is based on the diffusion technique and uses the pre-trained large speech representation model HuBERT to encode the audio input. To the best of our knowledge, we are the first to employ the diffusion method for the task of speech-driven 3D facial animation synthesis. We have run extensive objective and subjective analyses and show that our approach achieves better or comparable results in comparison to the state-of-the-art methods. We also introduce a new in-house dataset that is based on a blendshape based rigged character. We recommend watching the accompanying supplementary video. The code and the dataset will be publicly available.

+

+

+

+ 21. 标题:Generalizing Across Domains in Diabetic Retinopathy via Variational Autoencoders

+ 编号:[79]

+ 链接:https://arxiv.org/abs/2309.11301

+ 作者:Sharon Chokuwa, Muhammad H. Khan

+ 备注:Accepted at MICCAI 2023 1st International Workshop on Foundation Models for General Medical AI (MedAGI)

+ 关键词:Diabetic Retinopathy, adeptly classify retinal, previously unseen domains, classify retinal images, patient demographics

+

+ 点击查看摘要

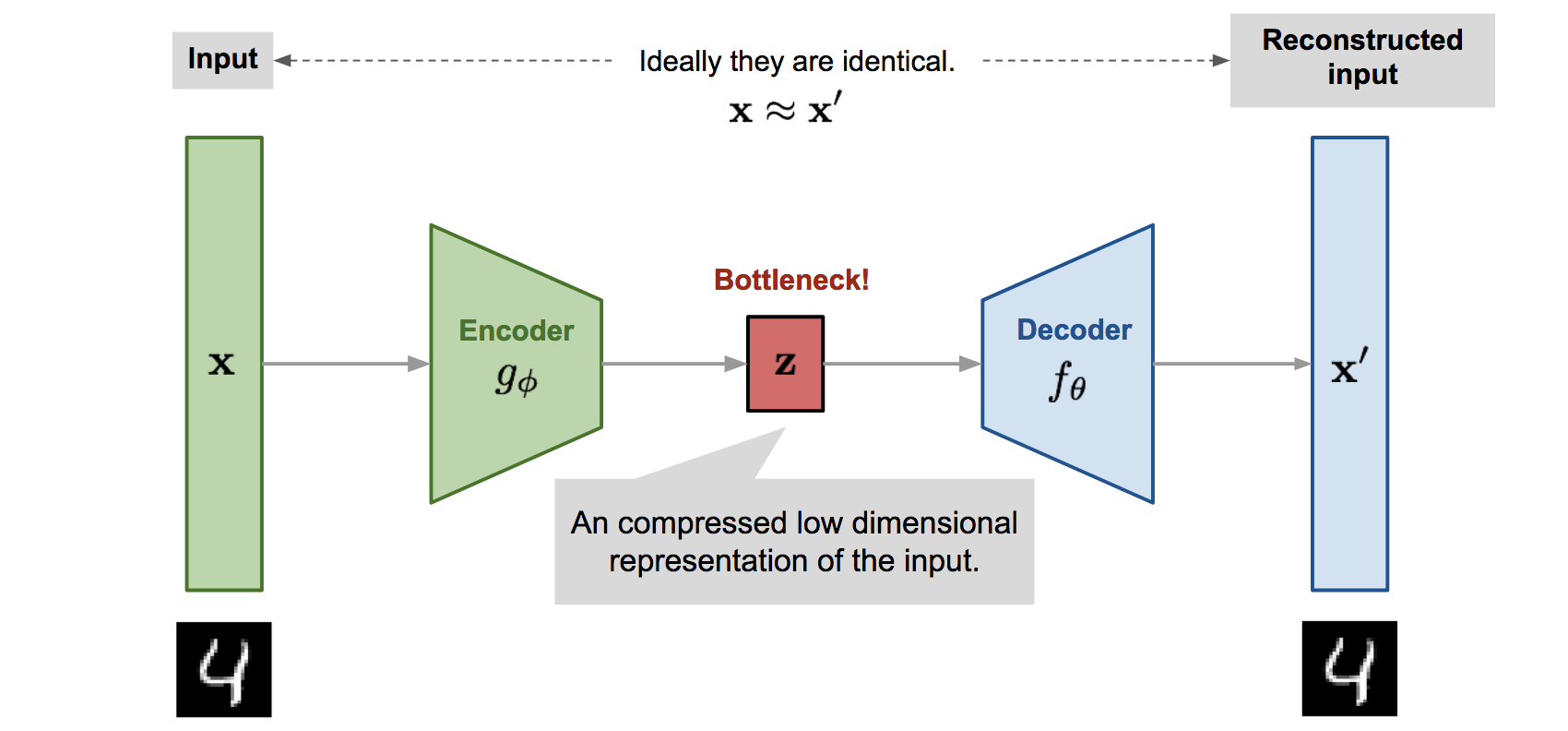

+ Domain generalization for Diabetic Retinopathy (DR) classification allows a model to adeptly classify retinal images from previously unseen domains with various imaging conditions and patient demographics, thereby enhancing its applicability in a wide range of clinical environments. In this study, we explore the inherent capacity of variational autoencoders to disentangle the latent space of fundus images, with an aim to obtain a more robust and adaptable domain-invariant representation that effectively tackles the domain shift encountered in DR datasets. Despite the simplicity of our approach, we explore the efficacy of this classical method and demonstrate its ability to outperform contemporary state-of-the-art approaches for this task using publicly available datasets. Our findings challenge the prevailing assumption that highly sophisticated methods for DR classification are inherently superior for domain generalization. This highlights the importance of considering simple methods and adapting them to the challenging task of generalizing medical images, rather than solely relying on advanced techniques.

+

+

+

+ 22. 标题:Language-driven Object Fusion into Neural Radiance Fields with Pose-Conditioned Dataset Updates

+ 编号:[89]

+ 链接:https://arxiv.org/abs/2309.11281

+ 作者:Ka Chun Shum, Jaeyeon Kim, Binh-Son Hua, Duc Thanh Nguyen, Sai-Kit Yeung

+ 备注:

+ 关键词:radiance field, Neural radiance, Neural radiance field, high-quality multi-view consistent, emerging rendering method

+

+ 点击查看摘要

+ Neural radiance field is an emerging rendering method that generates high-quality multi-view consistent images from a neural scene representation and volume rendering. Although neural radiance field-based techniques are robust for scene reconstruction, their ability to add or remove objects remains limited. This paper proposes a new language-driven approach for object manipulation with neural radiance fields through dataset updates. Specifically, to insert a new foreground object represented by a set of multi-view images into a background radiance field, we use a text-to-image diffusion model to learn and generate combined images that fuse the object of interest into the given background across views. These combined images are then used for refining the background radiance field so that we can render view-consistent images containing both the object and the background. To ensure view consistency, we propose a dataset updates strategy that prioritizes radiance field training with camera views close to the already-trained views prior to propagating the training to remaining views. We show that under the same dataset updates strategy, we can easily adapt our method for object insertion using data from text-to-3D models as well as object removal. Experimental results show that our method generates photorealistic images of the edited scenes, and outperforms state-of-the-art methods in 3D reconstruction and neural radiance field blending.

+

+

+

+ 23. 标题:Towards Real-Time Neural Video Codec for Cross-Platform Application Using Calibration Information

+ 编号:[91]

+ 链接:https://arxiv.org/abs/2309.11276

+ 作者:Kuan Tian, Yonghang Guan, Jinxi Xiang, Jun Zhang, Xiao Han, Wei Yang

+ 备注:14 pages

+ 关键词:sophisticated traditional codecs, decoding, sophisticated traditional, encoding, cross-platform neural video

+

+ 点击查看摘要

+ The state-of-the-art neural video codecs have outperformed the most sophisticated traditional codecs in terms of RD performance in certain cases. However, utilizing them for practical applications is still challenging for two major reasons. 1) Cross-platform computational errors resulting from floating point operations can lead to inaccurate decoding of the bitstream. 2) The high computational complexity of the encoding and decoding process poses a challenge in achieving real-time performance. In this paper, we propose a real-time cross-platform neural video codec, which is capable of efficiently decoding of 720P video bitstream from other encoding platforms on a consumer-grade GPU. First, to solve the problem of inconsistency of codec caused by the uncertainty of floating point calculations across platforms, we design a calibration transmitting system to guarantee the consistent quantization of entropy parameters between the encoding and decoding stages. The parameters that may have transboundary quantization between encoding and decoding are identified in the encoding stage, and their coordinates will be delivered by auxiliary transmitted bitstream. By doing so, these inconsistent parameters can be processed properly in the decoding stage. Furthermore, to reduce the bitrate of the auxiliary bitstream, we rectify the distribution of entropy parameters using a piecewise Gaussian constraint. Second, to match the computational limitations on the decoding side for real-time video codec, we design a lightweight model. A series of efficiency techniques enable our model to achieve 25 FPS decoding speed on NVIDIA RTX 2080 GPU. Experimental results demonstrate that our model can achieve real-time decoding of 720P videos while encoding on another platform. Furthermore, the real-time model brings up to a maximum of 24.2\% BD-rate improvement from the perspective of PSNR with the anchor H.265.

+

+

+

+ 24. 标题:StructChart: Perception, Structuring, Reasoning for Visual Chart Understanding

+ 编号:[96]

+ 链接:https://arxiv.org/abs/2309.11268

+ 作者:Renqiu Xia, Bo Zhang, Haoyang Peng, Ning Liao, Peng Ye, Botian Shi, Junchi Yan, Yu Qiao

+ 备注:21 pages, 11 figures

+ 关键词:conveying rich information, rich information easily, information easily accessible, scientific fields, conveying rich

+

+ 点击查看摘要

+ Charts are common in literature across different scientific fields, conveying rich information easily accessible to readers. Current chart-related tasks focus on either chart perception which refers to extracting information from the visual charts, or performing reasoning given the extracted data, e.g. in a tabular form. In this paper, we aim to establish a unified and label-efficient learning paradigm for joint perception and reasoning tasks, which can be generally applicable to different downstream tasks, beyond the question-answering task as specifically studied in peer works. Specifically, StructChart first reformulates the chart information from the popular tubular form (specifically linearized CSV) to the proposed Structured Triplet Representations (STR), which is more friendly for reducing the task gap between chart perception and reasoning due to the employed structured information extraction for charts. We then propose a Structuring Chart-oriented Representation Metric (SCRM) to quantitatively evaluate the performance for the chart perception task. To enrich the dataset for training, we further explore the possibility of leveraging the Large Language Model (LLM), enhancing the chart diversity in terms of both chart visual style and its statistical information. Extensive experiments are conducted on various chart-related tasks, demonstrating the effectiveness and promising potential for a unified chart perception-reasoning paradigm to push the frontier of chart understanding.

+

+

+

+ 25. 标题:From Classification to Segmentation with Explainable AI: A Study on Crack Detection and Growth Monitoring

+ 编号:[97]

+ 链接:https://arxiv.org/abs/2309.11267

+ 作者:Florent Forest, Hugo Porta, Devis Tuia, Olga Fink

+ 备注:43 pages. Under review

+ 关键词:structural health monitoring, infrastructure is crucial, crucial for structural, structural health, Monitoring

+

+ 点击查看摘要

+ Monitoring surface cracks in infrastructure is crucial for structural health monitoring. Automatic visual inspection offers an effective solution, especially in hard-to-reach areas. Machine learning approaches have proven their effectiveness but typically require large annotated datasets for supervised training. Once a crack is detected, monitoring its severity often demands precise segmentation of the damage. However, pixel-level annotation of images for segmentation is labor-intensive. To mitigate this cost, one can leverage explainable artificial intelligence (XAI) to derive segmentations from the explanations of a classifier, requiring only weak image-level supervision. This paper proposes applying this methodology to segment and monitor surface cracks. We evaluate the performance of various XAI methods and examine how this approach facilitates severity quantification and growth monitoring. Results reveal that while the resulting segmentation masks may exhibit lower quality than those produced by supervised methods, they remain meaningful and enable severity monitoring, thus reducing substantial labeling costs.

+

+

+

+ 26. 标题:TwinTex: Geometry-aware Texture Generation for Abstracted 3D Architectural Models

+ 编号:[99]

+ 链接:https://arxiv.org/abs/2309.11258

+ 作者:Weidan Xiong, Hongqian Zhang, Botao Peng, Ziyu Hu, Yongli Wu, Jianwei Guo, Hui Huang

+ 备注:Accepted to SIGGRAPH ASIA 2023

+ 关键词:Digital Twin City, Coarse architectural models, Twin City, Digital Twin, Coarse architectural

+

+ 点击查看摘要

+ Coarse architectural models are often generated at scales ranging from individual buildings to scenes for downstream applications such as Digital Twin City, Metaverse, LODs, etc. Such piece-wise planar models can be abstracted as twins from 3D dense reconstructions. However, these models typically lack realistic texture relative to the real building or scene, making them unsuitable for vivid display or direct reference. In this paper, we present TwinTex, the first automatic texture mapping framework to generate a photo-realistic texture for a piece-wise planar proxy. Our method addresses most challenges occurring in such twin texture generation. Specifically, for each primitive plane, we first select a small set of photos with greedy heuristics considering photometric quality, perspective quality and facade texture completeness. Then, different levels of line features (LoLs) are extracted from the set of selected photos to generate guidance for later steps. With LoLs, we employ optimization algorithms to align texture with geometry from local to global. Finally, we fine-tune a diffusion model with a multi-mask initialization component and a new dataset to inpaint the missing region. Experimental results on many buildings, indoor scenes and man-made objects of varying complexity demonstrate the generalization ability of our algorithm. Our approach surpasses state-of-the-art texture mapping methods in terms of high-fidelity quality and reaches a human-expert production level with much less effort. Project page: https://vcc.tech/research/2023/TwinTex.

+

+

+

+ 27. 标题:The Scenario Refiner: Grounding subjects in images at the morphological level

+ 编号:[102]

+ 链接:https://arxiv.org/abs/2309.11252

+ 作者:Claudia Tagliaferri, Sofia Axioti, Albert Gatt, Denis Paperno

+ 备注:presented at the LIMO workshop (Linguistic Insights from and for Multimodal Language Processing @KONVENS 2023)

+ 关键词:exhibit semantic differences, Derivationally related words, exhibit semantic, visual scenarios, semantic differences

+

+ 点击查看摘要

+ Derivationally related words, such as "runner" and "running", exhibit semantic differences which also elicit different visual scenarios. In this paper, we ask whether Vision and Language (V\&L) models capture such distinctions at the morphological level, using a a new methodology and dataset. We compare the results from V\&L models to human judgements and find that models' predictions differ from those of human participants, in particular displaying a grammatical bias. We further investigate whether the human-model misalignment is related to model architecture. Our methodology, developed on one specific morphological contrast, can be further extended for testing models on capturing other nuanced language features.

+

+

+

+ 28. 标题:Box2Poly: Memory-Efficient Polygon Prediction of Arbitrarily Shaped and Rotated Text

+ 编号:[104]

+ 链接:https://arxiv.org/abs/2309.11248

+ 作者:Xuyang Chen, Dong Wang, Konrad Schindler, Mingwei Sun, Yongliang Wang, Nicolo Savioli, Liqiu Meng

+ 备注:

+ 关键词:individual boundary vertices, Transformer-based text detection, distinct query features, Transformer-based text, techniques have sought

+

+ 点击查看摘要

+ Recently, Transformer-based text detection techniques have sought to predict polygons by encoding the coordinates of individual boundary vertices using distinct query features. However, this approach incurs a significant memory overhead and struggles to effectively capture the intricate relationships between vertices belonging to the same instance. Consequently, irregular text layouts often lead to the prediction of outlined vertices, diminishing the quality of results. To address these challenges, we present an innovative approach rooted in Sparse R-CNN: a cascade decoding pipeline for polygon prediction. Our method ensures precision by iteratively refining polygon predictions, considering both the scale and location of preceding results. Leveraging this stabilized regression pipeline, even employing just a single feature vector to guide polygon instance regression yields promising detection results. Simultaneously, the leverage of instance-level feature proposal substantially enhances memory efficiency (>50% less vs. the state-of-the-art method DPText-DETR) and reduces inference speed (>40% less vs. DPText-DETR) with minor performance drop on benchmarks.

+

+

+

+ 29. 标题:Towards Robust Few-shot Point Cloud Semantic Segmentation

+ 编号:[116]

+ 链接:https://arxiv.org/abs/2309.11228

+ 作者:Yating Xu, Na Zhao, Gim Hee Lee

+ 备注:BMVC 2023

+ 关键词:semantic segmentation aims, Few-shot point cloud, point cloud semantic, support set, cloud semantic segmentation

+

+ 点击查看摘要

+ Few-shot point cloud semantic segmentation aims to train a model to quickly adapt to new unseen classes with only a handful of support set samples. However, the noise-free assumption in the support set can be easily violated in many practical real-world settings. In this paper, we focus on improving the robustness of few-shot point cloud segmentation under the detrimental influence of noisy support sets during testing time. To this end, we first propose a Component-level Clean Noise Separation (CCNS) representation learning to learn discriminative feature representations that separates the clean samples of the target classes from the noisy samples. Leveraging the well separated clean and noisy support samples from our CCNS, we further propose a Multi-scale Degree-based Noise Suppression (MDNS) scheme to remove the noisy shots from the support set. We conduct extensive experiments on various noise settings on two benchmark datasets. Our results show that the combination of CCNS and MDNS significantly improves the performance. Our code is available at this https URL.

+

+

+

+ 30. 标题:Generalized Few-Shot Point Cloud Segmentation Via Geometric Words

+ 编号:[119]

+ 链接:https://arxiv.org/abs/2309.11222

+ 作者:Yating Xu, Conghui Hu, Na Zhao, Gim Hee Lee

+ 备注:Accepted by ICCV 2023

+ 关键词:point cloud segmentation, dynamic testing environment, Existing fully-supervised point, fully-supervised point cloud, Few-shot point cloud

+

+ 点击查看摘要

+ Existing fully-supervised point cloud segmentation methods suffer in the dynamic testing environment with emerging new classes. Few-shot point cloud segmentation algorithms address this problem by learning to adapt to new classes at the sacrifice of segmentation accuracy for the base classes, which severely impedes its practicality. This largely motivates us to present the first attempt at a more practical paradigm of generalized few-shot point cloud segmentation, which requires the model to generalize to new categories with only a few support point clouds and simultaneously retain the capability to segment base classes. We propose the geometric words to represent geometric components shared between the base and novel classes, and incorporate them into a novel geometric-aware semantic representation to facilitate better generalization to the new classes without forgetting the old ones. Moreover, we introduce geometric prototypes to guide the segmentation with geometric prior knowledge. Extensive experiments on S3DIS and ScanNet consistently illustrate the superior performance of our method over baseline methods. Our code is available at: this https URL.

+

+

+

+ 31. 标题:Automatic Bat Call Classification using Transformer Networks

+ 编号:[120]

+ 链接:https://arxiv.org/abs/2309.11218

+ 作者:Frank Fundel, Daniel A. Braun, Sebastian Gottwald

+ 备注:Volume 78, December 2023, 102288

+ 关键词:Automatically identifying bat, Automatically identifying, difficult but important, important task, task for monitoring

+

+ 点击查看摘要

+ Automatically identifying bat species from their echolocation calls is a difficult but important task for monitoring bats and the ecosystem they live in. Major challenges in automatic bat call identification are high call variability, similarities between species, interfering calls and lack of annotated data. Many currently available models suffer from relatively poor performance on real-life data due to being trained on single call datasets and, moreover, are often too slow for real-time classification. Here, we propose a Transformer architecture for multi-label classification with potential applications in real-time classification scenarios. We train our model on synthetically generated multi-species recordings by merging multiple bats calls into a single recording with multiple simultaneous calls. Our approach achieves a single species accuracy of 88.92% (F1-score of 84.23%) and a multi species macro F1-score of 74.40% on our test set. In comparison to three other tools on the independent and publicly available dataset ChiroVox, our model achieves at least 25.82% better accuracy for single species classification and at least 6.9% better macro F1-score for multi species classification.

+

+

+

+ 32. 标题:Partition-A-Medical-Image: Extracting Multiple Representative Sub-regions for Few-shot Medical Image Segmentation

+ 编号:[132]

+ 链接:https://arxiv.org/abs/2309.11172

+ 作者:Yazhou Zhu, Shidong Wang, Tong Xin, Zheng Zhang, Haofeng Zhang

+ 备注:

+ 关键词:Medical Image Segmentation, image segmentation tasks, Image Segmentation, segmentation tasks, Few-shot Medical Image

+

+ 点击查看摘要

+ Few-shot Medical Image Segmentation (FSMIS) is a more promising solution for medical image segmentation tasks where high-quality annotations are naturally scarce. However, current mainstream methods primarily focus on extracting holistic representations from support images with large intra-class variations in appearance and background, and encounter difficulties in adapting to query images. In this work, we present an approach to extract multiple representative sub-regions from a given support medical image, enabling fine-grained selection over the generated image regions. Specifically, the foreground of the support image is decomposed into distinct regions, which are subsequently used to derive region-level representations via a designed Regional Prototypical Learning (RPL) module. We then introduce a novel Prototypical Representation Debiasing (PRD) module based on a two-way elimination mechanism which suppresses the disturbance of regional representations by a self-support, Multi-direction Self-debiasing (MS) block, and a support-query, Interactive Debiasing (ID) block. Finally, an Assembled Prediction (AP) module is devised to balance and integrate predictions of multiple prototypical representations learned using stacked PRD modules. Results obtained through extensive experiments on three publicly accessible medical imaging datasets demonstrate consistent improvements over the leading FSMIS methods. The source code is available at this https URL.

+

+

+

+ 33. 标题:AutoSynth: Learning to Generate 3D Training Data for Object Point Cloud Registration

+ 编号:[133]

+ 链接:https://arxiv.org/abs/2309.11170

+ 作者:Zheng Dang, Mathieu Salzmann

+ 备注:accepted by ICCV2023

+ 关键词:deep learning paradigm, current deep learning, point cloud registration, learning paradigm, current deep

+

+ 点击查看摘要

+ In the current deep learning paradigm, the amount and quality of training data are as critical as the network architecture and its training details. However, collecting, processing, and annotating real data at scale is difficult, expensive, and time-consuming, particularly for tasks such as 3D object registration. While synthetic datasets can be created, they require expertise to design and include a limited number of categories. In this paper, we introduce a new approach called AutoSynth, which automatically generates 3D training data for point cloud registration. Specifically, AutoSynth automatically curates an optimal dataset by exploring a search space encompassing millions of potential datasets with diverse 3D shapes at a low this http URL achieve this, we generate synthetic 3D datasets by assembling shape primitives, and develop a meta-learning strategy to search for the best training data for 3D registration on real point clouds. For this search to remain tractable, we replace the point cloud registration network with a much smaller surrogate network, leading to a $4056.43$ times speedup. We demonstrate the generality of our approach by implementing it with two different point cloud registration networks, BPNet and IDAM. Our results on TUD-L, LINEMOD and Occluded-LINEMOD evidence that a neural network trained on our searched dataset yields consistently better performance than the same one trained on the widely used ModelNet40 dataset.

+

+

+

+ 34. 标题:Multi-grained Temporal Prototype Learning for Few-shot Video Object Segmentation

+ 编号:[137]

+ 链接:https://arxiv.org/abs/2309.11160

+ 作者:Nian Liu, Kepan Nan, Wangbo Zhao, Yuanwei Liu, Xiwen Yao, Salman Khan, Hisham Cholakkal, Rao Muhammad Anwer, Junwei Han, Fahad Shahbaz Khan

+ 备注:ICCV 2023

+ 关键词:Few-Shot Video Object, Video Object Segmentation, segment objects, Object Segmentation, aims to segment

+

+ 点击查看摘要

+ Few-Shot Video Object Segmentation (FSVOS) aims to segment objects in a query video with the same category defined by a few annotated support images. However, this task was seldom explored. In this work, based on IPMT, a state-of-the-art few-shot image segmentation method that combines external support guidance information with adaptive query guidance cues, we propose to leverage multi-grained temporal guidance information for handling the temporal correlation nature of video data. We decompose the query video information into a clip prototype and a memory prototype for capturing local and long-term internal temporal guidance, respectively. Frame prototypes are further used for each frame independently to handle fine-grained adaptive guidance and enable bidirectional clip-frame prototype communication. To reduce the influence of noisy memory, we propose to leverage the structural similarity relation among different predicted regions and the support for selecting reliable memory frames. Furthermore, a new segmentation loss is also proposed to enhance the category discriminability of the learned prototypes. Experimental results demonstrate that our proposed video IPMT model significantly outperforms previous models on two benchmark datasets. Code is available at this https URL.

+

+

+

+ 35. 标题:Learning Deformable 3D Graph Similarity to Track Plant Cells in Unregistered Time Lapse Images

+ 编号:[138]

+ 链接:https://arxiv.org/abs/2309.11157

+ 作者:Md Shazid Islam, Arindam Dutta, Calvin-Khang Ta, Kevin Rodriguez, Christian Michael, Mark Alber, G. Venugopala Reddy, Amit K. Roy-Chowdhury

+ 备注:

+ 关键词:challenging problem due, non-uniform growth, tightly packed plant, obtained by microscope, due to biological

+

+ 点击查看摘要

+ Tracking of plant cells in images obtained by microscope is a challenging problem due to biological phenomena such as large number of cells, non-uniform growth of different layers of the tightly packed plant cells and cell division. Moreover, images in deeper layers of the tissue being noisy and unavoidable systemic errors inherent in the imaging process further complicates the problem. In this paper, we propose a novel learning-based method that exploits the tightly packed three-dimensional cell structure of plant cells to create a three-dimensional graph in order to perform accurate cell tracking. We further propose novel algorithms for cell division detection and effective three-dimensional registration, which improve upon the state-of-the-art algorithms. We demonstrate the efficacy of our algorithm in terms of tracking accuracy and inference-time on a benchmark dataset.

+

+

+

+ 36. 标题:CNN-based local features for navigation near an asteroid

+ 编号:[139]

+ 链接:https://arxiv.org/abs/2309.11156

+ 作者:Olli Knuuttila, Antti Kestilä, Esa Kallio

+ 备注:

+ 关键词:on-orbit servicing, vision-based proximity navigation, article addresses, addresses the challenge, challenge of vision-based

+

+ 点击查看摘要

+ This article addresses the challenge of vision-based proximity navigation in asteroid exploration missions and on-orbit servicing. Traditional feature extraction methods struggle with the significant appearance variations of asteroids due to limited scattered light. To overcome this, we propose a lightweight feature extractor specifically tailored for asteroid proximity navigation, designed to be robust to illumination changes and affine transformations. We compare and evaluate state-of-the-art feature extraction networks and three lightweight network architectures in the asteroid context. Our proposed feature extractors and their evaluation leverages both synthetic images and real-world data from missions such as NEAR Shoemaker, Hayabusa, Rosetta, and OSIRIS-REx. Our contributions include a trained feature extractor, incremental improvements over existing methods, and a pipeline for training domain-specific feature extractors. Experimental results demonstrate the effectiveness of our approach in achieving accurate navigation and localization. This work aims to advance the field of asteroid navigation and provides insights for future research in this domain.

+

+

+

+ 37. 标题:Online Calibration of a Single-Track Ground Vehicle Dynamics Model by Tight Fusion with Visual-Inertial Odometry

+ 编号:[142]

+ 链接:https://arxiv.org/abs/2309.11148

+ 作者:Haolong Li, Joerg Stueckler

+ 备注:Submitted to ICRA 2024

+ 关键词:Wheeled mobile robots, navigation planning, mobile robots, ability to estimate, actions for navigation

+

+ 点击查看摘要

+ Wheeled mobile robots need the ability to estimate their motion and the effect of their control actions for navigation planning. In this paper, we present ST-VIO, a novel approach which tightly fuses a single-track dynamics model for wheeled ground vehicles with visual inertial odometry. Our method calibrates and adapts the dynamics model online and facilitates accurate forward prediction conditioned on future control inputs. The single-track dynamics model approximates wheeled vehicle motion under specific control inputs on flat ground using ordinary differential equations. We use a singularity-free and differentiable variant of the single-track model to enable seamless integration as dynamics factor into VIO and to optimize the model parameters online together with the VIO state variables. We validate our method with real-world data in both indoor and outdoor environments with different terrain types and wheels. In our experiments, we demonstrate that our ST-VIO can not only adapt to the change of the environments and achieve accurate prediction under new control inputs, but even improves the tracking accuracy. Supplementary video: this https URL.

+

+

+

+ 38. 标题:GraphEcho: Graph-Driven Unsupervised Domain Adaptation for Echocardiogram Video Segmentation

+ 编号:[144]

+ 链接:https://arxiv.org/abs/2309.11145

+ 作者:Jiewen Yang, Xinpeng Ding, Ziyang Zheng, Xiaowei Xu, Xiaomeng Li

+ 备注:Accepted By ICCV 2023

+ 关键词:cardiac disease diagnosis, UDA segmentation methods, Echocardiogram video segmentation, UDA segmentation, video segmentation plays

+

+ 点击查看摘要

+ Echocardiogram video segmentation plays an important role in cardiac disease diagnosis. This paper studies the unsupervised domain adaption (UDA) for echocardiogram video segmentation, where the goal is to generalize the model trained on the source domain to other unlabelled target domains. Existing UDA segmentation methods are not suitable for this task because they do not model local information and the cyclical consistency of heartbeat. In this paper, we introduce a newly collected CardiacUDA dataset and a novel GraphEcho method for cardiac structure segmentation. Our GraphEcho comprises two innovative modules, the Spatial-wise Cross-domain Graph Matching (SCGM) and the Temporal Cycle Consistency (TCC) module, which utilize prior knowledge of echocardiogram videos, i.e., consistent cardiac structure across patients and centers and the heartbeat cyclical consistency, respectively. These two modules can better align global and local features from source and target domains, improving UDA segmentation results. Experimental results showed that our GraphEcho outperforms existing state-of-the-art UDA segmentation methods. Our collected dataset and code will be publicly released upon acceptance. This work will lay a new and solid cornerstone for cardiac structure segmentation from echocardiogram videos. Code and dataset are available at: this https URL

+

+

+

+ 39. 标题:GL-Fusion: Global-Local Fusion Network for Multi-view Echocardiogram Video Segmentation

+ 编号:[145]

+ 链接:https://arxiv.org/abs/2309.11144

+ 作者:Ziyang Zheng, Jiewen Yang, Xinpeng Ding, Xiaowei Xu, Xiaomeng Li

+ 备注:Accepted By MICCAI 2023

+ 关键词:diagnosing heart disease, heart disease, Global-based Fusion Module, plays a crucial, crucial role

+

+ 点击查看摘要

+ Cardiac structure segmentation from echocardiogram videos plays a crucial role in diagnosing heart disease. The combination of multi-view echocardiogram data is essential to enhance the accuracy and robustness of automated methods. However, due to the visual disparity of the data, deriving cross-view context information remains a challenging task, and unsophisticated fusion strategies can even lower performance. In this study, we propose a novel Gobal-Local fusion (GL-Fusion) network to jointly utilize multi-view information globally and locally that improve the accuracy of echocardiogram analysis. Specifically, a Multi-view Global-based Fusion Module (MGFM) is proposed to extract global context information and to explore the cyclic relationship of different heartbeat cycles in an echocardiogram video. Additionally, a Multi-view Local-based Fusion Module (MLFM) is designed to extract correlations of cardiac structures from different views. Furthermore, we collect a multi-view echocardiogram video dataset (MvEVD) to evaluate our method. Our method achieves an 82.29% average dice score, which demonstrates a 7.83% improvement over the baseline method, and outperforms other existing state-of-the-art methods. To our knowledge, this is the first exploration of a multi-view method for echocardiogram video segmentation. Code available at: this https URL

+

+

+

+ 40. 标题:Shape Anchor Guided Holistic Indoor Scene Understanding

+ 编号:[151]

+ 链接:https://arxiv.org/abs/2309.11133

+ 作者:Mingyue Dong, Linxi Huan, Hanjiang Xiong, Shuhan Shen, Xianwei Zheng

+ 备注:

+ 关键词:robust holistic indoor, guided learning strategy, indoor scene understanding, holistic indoor scene, paper proposes

+

+ 点击查看摘要

+ This paper proposes a shape anchor guided learning strategy (AncLearn) for robust holistic indoor scene understanding. We observe that the search space constructed by current methods for proposal feature grouping and instance point sampling often introduces massive noise to instance detection and mesh reconstruction. Accordingly, we develop AncLearn to generate anchors that dynamically fit instance surfaces to (i) unmix noise and target-related features for offering reliable proposals at the detection stage, and (ii) reduce outliers in object point sampling for directly providing well-structured geometry priors without segmentation during reconstruction. We embed AncLearn into a reconstruction-from-detection learning system (AncRec) to generate high-quality semantic scene models in a purely instance-oriented manner. Experiments conducted on the challenging ScanNetv2 dataset demonstrate that our shape anchor-based method consistently achieves state-of-the-art performance in terms of 3D object detection, layout estimation, and shape reconstruction. The code will be available at this https URL.

+

+

+

+ 41. 标题:Contrastive Pseudo Learning for Open-World DeepFake Attribution

+ 编号:[152]

+ 链接:https://arxiv.org/abs/2309.11132

+ 作者:Zhimin Sun, Shen Chen, Taiping Yao, Bangjie Yin, Ran Yi, Shouhong Ding, Lizhuang Ma

+ 备注:16 pages, 7 figures, ICCV 2023

+ 关键词:gained widespread attention, widespread attention due, challenge in sourcing, gained widespread, widespread attention

+

+ 点击查看摘要

+ The challenge in sourcing attribution for forgery faces has gained widespread attention due to the rapid development of generative techniques. While many recent works have taken essential steps on GAN-generated faces, more threatening attacks related to identity swapping or expression transferring are still overlooked. And the forgery traces hidden in unknown attacks from the open-world unlabeled faces still remain under-explored. To push the related frontier research, we introduce a new benchmark called Open-World DeepFake Attribution (OW-DFA), which aims to evaluate attribution performance against various types of fake faces under open-world scenarios. Meanwhile, we propose a novel framework named Contrastive Pseudo Learning (CPL) for the OW-DFA task through 1) introducing a Global-Local Voting module to guide the feature alignment of forged faces with different manipulated regions, 2) designing a Confidence-based Soft Pseudo-label strategy to mitigate the pseudo-noise caused by similar methods in unlabeled set. In addition, we extend the CPL framework with a multi-stage paradigm that leverages pre-train technique and iterative learning to further enhance traceability performance. Extensive experiments verify the superiority of our proposed method on the OW-DFA and also demonstrate the interpretability of deepfake attribution task and its impact on improving the security of deepfake detection area.

+

+

+

+ 42. 标题:Locate and Verify: A Two-Stream Network for Improved Deepfake Detection

+ 编号:[153]

+ 链接:https://arxiv.org/abs/2309.11131

+ 作者:Chao Shuai, Jieming Zhong, Shuang Wu, Feng Lin, Zhibo Wang, Zhongjie Ba, Zhenguang Liu, Lorenzo Cavallaro, Kui Ren

+ 备注:10 pages, 8 figures, 60 references. This paper has been accepted for ACM MM 2023

+ 关键词:world by storm, triggering a trust, trust crisis, Current deepfake detection, deepfake detection

+

+ 点击查看摘要

+ Deepfake has taken the world by storm, triggering a trust crisis. Current deepfake detection methods are typically inadequate in generalizability, with a tendency to overfit to image contents such as the background, which are frequently occurring but relatively unimportant in the training dataset. Furthermore, current methods heavily rely on a few dominant forgery regions and may ignore other equally important regions, leading to inadequate uncovering of forgery cues. In this paper, we strive to address these shortcomings from three aspects: (1) We propose an innovative two-stream network that effectively enlarges the potential regions from which the model extracts forgery evidence. (2) We devise three functional modules to handle the multi-stream and multi-scale features in a collaborative learning scheme. (3) Confronted with the challenge of obtaining forgery annotations, we propose a Semi-supervised Patch Similarity Learning strategy to estimate patch-level forged location annotations. Empirically, our method demonstrates significantly improved robustness and generalizability, outperforming previous methods on six benchmarks, and improving the frame-level AUC on Deepfake Detection Challenge preview dataset from 0.797 to 0.835 and video-level AUC on CelebDF$\_$v1 dataset from 0.811 to 0.847. Our implementation is available at this https URL.

+

+

+

+ 43. 标题:PSDiff: Diffusion Model for Person Search with Iterative and Collaborative Refinement

+ 编号:[154]

+ 链接:https://arxiv.org/abs/2309.11125

+ 作者:Chengyou Jia, Minnan Luo, Zhuohang Dang, Guang Dai, Xiaojun Chang, Jingdong Wang, Qinghua Zheng

+ 备注:

+ 关键词:recognize query persons, Dominant Person Search, Search methods aim, Person Search, unified network

+

+ 点击查看摘要

+ Dominant Person Search methods aim to localize and recognize query persons in a unified network, which jointly optimizes two sub-tasks, \ie, detection and Re-IDentification (ReID). Despite significant progress, two major challenges remain: 1) Detection-prior modules in previous methods are suboptimal for the ReID task. 2) The collaboration between two sub-tasks is ignored. To alleviate these issues, we present a novel Person Search framework based on the Diffusion model, PSDiff. PSDiff formulates the person search as a dual denoising process from noisy boxes and ReID embeddings to ground truths. Unlike existing methods that follow the Detection-to-ReID paradigm, our denoising paradigm eliminates detection-prior modules to avoid the local-optimum of the ReID task. Following the new paradigm, we further design a new Collaborative Denoising Layer (CDL) to optimize detection and ReID sub-tasks in an iterative and collaborative way, which makes two sub-tasks mutually beneficial. Extensive experiments on the standard benchmarks show that PSDiff achieves state-of-the-art performance with fewer parameters and elastic computing overhead.

+

+

+

+ 44. 标题:Hyperspectral Benchmark: Bridging the Gap between HSI Applications through Comprehensive Dataset and Pretraining

+ 编号:[156]

+ 链接:https://arxiv.org/abs/2309.11122

+ 作者:Hannah Frank, Leon Amadeus Varga, Andreas Zell

+ 备注:Hannah Frankand Leon Amadeus Varga contributed equally

+ 关键词:non-destructive spatial spectroscopy, spatial spectroscopy technique, Hyperspectral Imaging, non-destructive spatial, spatial spectroscopy

+

+ 点击查看摘要

+ Hyperspectral Imaging (HSI) serves as a non-destructive spatial spectroscopy technique with a multitude of potential applications. However, a recurring challenge lies in the limited size of the target datasets, impeding exhaustive architecture search. Consequently, when venturing into novel applications, reliance on established methodologies becomes commonplace, in the hope that they exhibit favorable generalization characteristics. Regrettably, this optimism is often unfounded due to the fine-tuned nature of models tailored to specific HSI contexts.

+To address this predicament, this study introduces an innovative benchmark dataset encompassing three markedly distinct HSI applications: food inspection, remote sensing, and recycling. This comprehensive dataset affords a finer assessment of hyperspectral model capabilities. Moreover, this benchmark facilitates an incisive examination of prevailing state-of-the-art techniques, consequently fostering the evolution of superior methodologies.

+Furthermore, the enhanced diversity inherent in the benchmark dataset underpins the establishment of a pretraining pipeline for HSI. This pretraining regimen serves to enhance the stability of training processes for larger models. Additionally, a procedural framework is delineated, offering insights into the handling of applications afflicted by limited target dataset sizes.

+

+

+

+ 45. 标题:BroadBEV: Collaborative LiDAR-camera Fusion for Broad-sighted Bird's Eye View Map Construction

+ 编号:[157]

+ 链接:https://arxiv.org/abs/2309.11119

+ 作者:Minsu Kim, Giseop Kim, Kyong Hwan Jin, Sunwook Choi

+ 备注:

+ 关键词:Bird Eye View, Eye View, Bird Eye, BEV, recent sensor fusion

+

+ 点击查看摘要

+ A recent sensor fusion in a Bird's Eye View (BEV) space has shown its utility in various tasks such as 3D detection, map segmentation, etc. However, the approach struggles with inaccurate camera BEV estimation, and a perception of distant areas due to the sparsity of LiDAR points. In this paper, we propose a broad BEV fusion (BroadBEV) that addresses the problems with a spatial synchronization approach of cross-modality. Our strategy aims to enhance camera BEV estimation for a broad-sighted perception while simultaneously improving the completion of LiDAR's sparsity in the entire BEV space. Toward that end, we devise Point-scattering that scatters LiDAR BEV distribution to camera depth distribution. The method boosts the learning of depth estimation of the camera branch and induces accurate location of dense camera features in BEV space. For an effective BEV fusion between the spatially synchronized features, we suggest ColFusion that applies self-attention weights of LiDAR and camera BEV features to each other. Our extensive experiments demonstrate that BroadBEV provides a broad-sighted BEV perception with remarkable performance gains.

+

+

+

+ 46. 标题:PRAT: PRofiling Adversarial aTtacks

+ 编号:[159]

+ 链接:https://arxiv.org/abs/2309.11111

+ 作者:Rahul Ambati, Naveed Akhtar, Ajmal Mian, Yogesh Singh Rawat

+ 备注:

+ 关键词:fooling deep models, broad common objective, deep models, deep learning, fooling deep

+

+ 点击查看摘要

+ Intrinsic susceptibility of deep learning to adversarial examples has led to a plethora of attack techniques with a broad common objective of fooling deep models. However, we find slight compositional differences between the algorithms achieving this objective. These differences leave traces that provide important clues for attacker profiling in real-life scenarios. Inspired by this, we introduce a novel problem of PRofiling Adversarial aTtacks (PRAT). Given an adversarial example, the objective of PRAT is to identify the attack used to generate it. Under this perspective, we can systematically group existing attacks into different families, leading to the sub-problem of attack family identification, which we also study. To enable PRAT analysis, we introduce a large Adversarial Identification Dataset (AID), comprising over 180k adversarial samples generated with 13 popular attacks for image specific/agnostic white/black box setups. We use AID to devise a novel framework for the PRAT objective. Our framework utilizes a Transformer based Global-LOcal Feature (GLOF) module to extract an approximate signature of the adversarial attack, which in turn is used for the identification of the attack. Using AID and our framework, we provide multiple interesting benchmark results for the PRAT problem.

+

+

+

+ 47. 标题:Self-supervised Domain-agnostic Domain Adaptation for Satellite Images

+ 编号:[160]

+ 链接:https://arxiv.org/abs/2309.11109

+ 作者:Fahong Zhang, Yilei Shi, Xiao Xiang Zhu

+ 备注:

+ 关键词:Domain shift caused, global scale satellite, satellite image processing, scale satellite image, shift caused

+

+ 点击查看摘要